Sensing is a major part of smart objects and equipment. Condition monitoring for predictive maintenance, for example, enables context awareness, improves production performance, and drastically reduces downtime through preventive maintenance.

The FP-AI-MONITOR2 function pack is a multi-sensor AI data monitoring framework on the wireless industrial node, for STM32Cube. It helps to jump-start the implementation and development of sensor-monitoring-based applications designed with the X-CUBE-AI, an Expansion Package for STM32Cube, or with the NanoEdge™ AI Studio, an autoML tool for generating AI models for tiny microcontrollers. It covers the entire design of the Machine Learning cycle from the data set acquisition to the integration and deployment on a physical node.

The FP-AI-MONITOR2 runs learning and inference sessions in real time on the SensorTile Wireless Industrial Node development kit box (STEVAL-STWINBX1), taking data from onboard sensors as input. It implements a wired interactive CLI to configure the node and manage the learn and detect phases. For simple in-the-field operation, it also supports a standalone battery-operated mode that allows basic control through the user button, without using the console.

The STEVAL-STWINBX1 has an STM32U585AIIxQ microcontroller, which is an ultra-low-power Arm® Cortex®-M33 MCU with FPU and TrustZone at 160 MHz, 2 Mbytes of Flash memory and 786 Kbytes of SRAM. In addition, the STEVAL-STWINBX1 embeds industrial-grade sensors, including a 6-axis IMU, a 3-axis accelerometer and vibrometer, and analog microphones, to record inertial, vibrational and acoustic data in the field with high accuracy at high sampling frequencies.

The rest of the article discusses the following topics:

- The required hardware and software,

- Pre-requisites and setup,

- FP-AI-MONITOR2 console application,

- Running a human activity recognition (HAR) application for sensing on the device,

- Running an anomaly detection application using NanoEdge™ AI libraries on the device,

- Running an n-class classification application using NanoEdge™ AI libraries on the device,

- Running an advanced dual-mode application on the device, which combines anomaly detection on vibration data using NanoEdge™ AI libraries with CNN-based n-class classification on ultrasound data,

- Performing the vibration and ultrasound sensor data collection using a prebuilt binary of FP-SNS-DATALOG2,

- Button operated modes, and

- Links to useful online resources, to help users better understand the project and customize it for their own needs.

This article serves only as a quick-start guide. For the full FP-AI-MONITOR2 user instructions, refer to the FP-AI-MONITOR2 User Manual.

1. Hardware and software overview

1.1. STWIN.box - SensorTile Wireless Industrial Node Development Kit

The STWIN.box (STEVAL-STWINBX1) is a development kit and reference design that simplifies prototyping and testing of advanced industrial sensing applications in IoT contexts such as condition monitoring and predictive maintenance.

It is powered by an ultra-low-power Arm® Cortex®-M33 MCU with FPU and TrustZone at 160 MHz and 2048 Kbytes of Flash memory (STM32U585AI).

It is an evolution of the original STWIN kit (STEVAL-STWINKT1B) and features a higher mechanical accuracy in the measurement of vibrations, an improved robustness, an updated BoM to reflect the latest and best-in-class MCU and industrial sensors, and an easy-to-use interface for external add-ons.

The STWIN.box kit consists of an STWIN.box core system, a 480 mAh LiPo battery, an adapter for the ST-LINK debugger, a plastic case, an adapter board for DIL 24 sensors, and a flexible cable.

Other features:

- MicroSD card slot for standalone data logging applications

- On-board Bluetooth® low energy v5.0 wireless technology (BlueNRG-M2), Wi-Fi (EMW3080) and NFC (ST25DV04K)

- Option to implement authentication and brand protection secure solution with STSAFE-A110

- Wide range of industrial IoT sensors:

  - Ultra-wide bandwidth (up to 6 kHz), low-noise, 3-axis digital vibration sensor (IIS3DWB)

  - 3D accelerometer + 3D gyro iNEMO inertial measurement unit (ISM330DHCX) with Machine Learning Core

  - High-performance ultra-low-power 3-axis accelerometer for industrial applications (IIS2DLPC)

  - Ultra-low-power 3-axis magnetometer (IIS2MDC)

  - High-accuracy, high-resolution, low-power, 2-axis digital inclinometer with embedded Machine Learning Core (IIS2ICLX)

  - Dual full-scale, 1.26 bar and 4 bar, absolute digital output barometer in full-mold package (ILPS22QS)

  - Low-voltage, ultra-low-power, 0.5°C accuracy I²C/SMBus 3.0 temperature sensor (STTS22H)

  - Industrial-grade digital MEMS microphone (IMP34DT05)

  - Analog MEMS microphone with frequency response up to 80 kHz (IMP23ABSU)

- Expandable via a 34-pin FPC connector

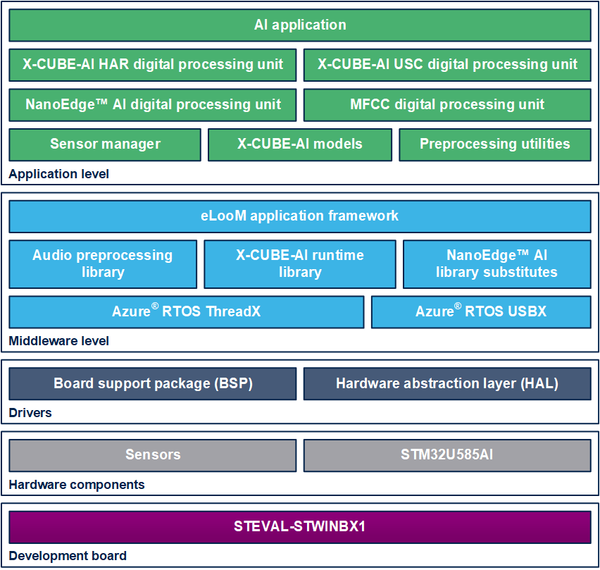

1.2. Software architecture

The top-level architecture of the FP-AI-MONITOR2 function pack is shown in the following figure.

2. Prerequisites and setup

2.1. HW prerequisites and setup

To use the FP-AI-MONITOR2 function pack on STEVAL-STWINBX1, the following hardware items are required:

- an STEVAL-STWINBX1 development kit board,

- a microSD™ card and card reader to log and read the sensor data,

- a Windows® powered laptop/PC,

- a USB C-type cable, to connect the sensor board to the PC,

- a USB micro B cable, for the STLINK-V3MINI, and

- an STLINK-V3MINI.

2.2. Software requirements

2.2.1. FP-AI-MONITOR2

- Download the latest version of the FP-AI-MONITOR2 package from the ST website, then extract the .zip file and copy its contents into a folder on the PC. The package contains binaries, source code and utilities for the sensor board STEVAL-STWINBX1.

2.2.2. IDE

- Install one of the following IDEs:

- STMicroelectronics STM32CubeIDE version 1.11.0 or later (tested on 1.11.0),

- IAR Embedded Workbench for Arm (EWARM) toolchain version 9.20.1 or later (tested on 9.20.1), or

- Keil® Microcontroller Development Kit (MDK-ARM) toolchain version 5.32 or later (tested on 5.32).

2.2.3. STM32CubeProgrammer

- STM32CubeProgrammer is an all-in-one multi-OS software tool for programming STM32 products. It provides an easy-to-use and efficient environment for reading, writing, and verifying device memory through both the debug interface (JTAG and SWD) and the bootloader interface (UART, USB DFU, I2C, SPI, and CAN). STM32CubeProgrammer offers a wide range of features to program STM32 internal memories (such as Flash, RAM, and OTP) as well as external memory. Download the latest version of the STM32CubeProgrammer. The FP-AI-MONITOR2 is tested with the STM32CubeProgrammer version 2.12.0.

- This software is available from STM32CubeProg.

2.2.4. Tera Term

- Tera Term is an open-source and freely available software terminal emulator, which is used to host the CLI of the FP-AI-MONITOR2 through a serial connection.

- Users can download and install the latest version available from Tera Term website.

2.2.5. STM32CubeMX

STM32CubeMX is a graphical tool that allows a very easy configuration of STM32 microcontrollers and microprocessors, as well as the generation of the corresponding initialization C code for the Arm® Cortex®-M core (or a partial Linux® Device Tree for the Arm® Cortex®-A core), through a step-by-step process. Its salient features include:

- Intuitive STM32 microcontroller and microprocessor selection.

- Generation of initialization C code project, compliant with IAR™, Keil® and STM32CubeIDE (GCC compilers) for Arm® Cortex®-M core,

- Development of enhanced STM32Cube Expansion Packages thanks to STM32PackCreator, and

- Integration of STM32Cube Expansion packages into the project.

FP-AI-MONITOR2 requires STM32CubeMX V 6.7.0 or later (tested on 6.7.0). To download the STM32CubeMX and obtain details of all the features please visit st.com.

2.2.6. X-CUBE-AI

X-CUBE-AI is an STM32Cube Expansion Package, part of the STM32Cube.AI ecosystem, that extends STM32CubeMX capabilities with automatic conversion of pre-trained Artificial Intelligence models and integration of the generated optimized library into the user project. The easiest way to use it is to download X-CUBE-AI (version 7.3.0 or newer) from inside the STM32CubeMX tool, as described in the user manual Getting started with X-CUBE-AI Expansion Package for Artificial Intelligence (AI) (UM2526). The X-CUBE-AI Expansion Package also offers several means to validate AI models (both neural network and scikit-learn models) on a desktop PC and on STM32, as well as to measure performance on STM32 devices (computational and memory footprints) without ad hoc handmade user C code.

2.2.7. Python 3.7.3

Python is an interpreted high-level general-purpose programming language. Python's design philosophy emphasizes code readability with its notable use of significant indentation. Its language constructs, as well as its object-oriented approach, aim to help programmers write clear, logical code for small and large-scale projects. To build and export AI models, the reader needs to set up a Python environment with a list of packages. The list of the required packages along with their versions is available in the text file /FP-AI-MONITOR2_V1.0.0/Utilities/requirements.txt. The following command, entered in the Anaconda Prompt or in an Ubuntu terminal, installs all the packages specified in requirements.txt:

pip install -r requirements.txt

2.2.8. NanoEdge™ AI Studio

NanoEdge™ AI Studio is a new Machine Learning (ML) technology to bring true innovation easily to the end-users. In just a few steps, developers can create optimal ML libraries using a minimal amount of data, for:

- Anomaly detection,

- 1-class classification,

- n-class classification, and

- Extrapolation.

This function pack supports only the anomaly detection and n-class classification libraries generated by NanoEdge™ AI Studio.

FP-AI-MONITOR2 is tested using NanoEdge™ AI Studio V3.2.0.

2.3. Installing the function pack

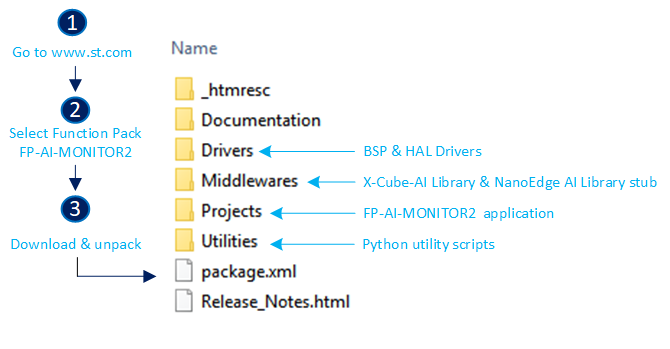

2.3.1. Getting the function pack

The first step is to get the function pack FP-AI-MONITOR2 from ST website. Once the pack is downloaded, unpack/unzip it and copy the content to a folder on the PC. The steps of the process along with the content of the folder are shown in the following image.

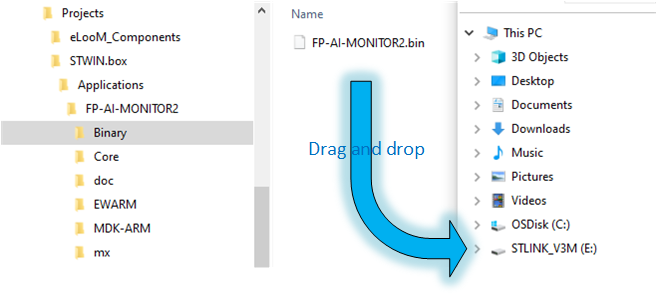

2.3.2. Flashing the application on the sensor board

This section explains how to select a binary file for the firmware and program it into the STM32 microcontroller. A precompiled binary file is delivered as part of the FP-AI-MONITOR2 function pack. It is located in the FP-AI-MONITOR2_V1.0.0\Projects\STWIN.box\Applications\FP-AI-MONITOR2\Binary\ folder. When the STM32 board and PC are connected through the USB cable on the STLINK-V3E connector, the STEVAL-STWINBX1 appears as a drive on the PC. The selected binary file can be installed on the STM32 board with a simple drag-and-drop operation, as shown in the figure below. This opens a dialog to copy the file; once the dialog disappears without any error, the firmware is programmed in the STM32 microcontroller.

3. FP-AI-MONITOR2 console application

FP-AI-MONITOR2 provides an interactive command-line interface (CLI) application. This CLI application lets the user configure and control the sensor node and perform different AI operations on the edge, including learning and anomaly detection (NanoEdge™ AI libraries), n-class classification (NanoEdge™ AI libraries), dual mode (a combination of NanoEdge™ AI anomaly detection and CNN-based classification), and human activity recognition using a CNN. The following sections provide a short guide on how to install this CLI application on a sensor board and control it through the serial connection from Tera Term.

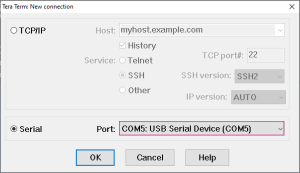

3.1. Setting up the console

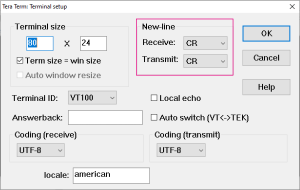

Once the sensor board is programmed with the binary of the project, the next step is to set up the serial connection of the board with the PC through Tera Term. To do so, start Tera Term and select the connection labeled [USB Serial Device]. In the screenshot below this is COM5, but it can vary for different users.

Set the Terminal parameters:

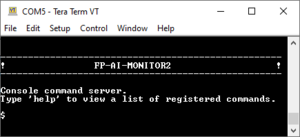

Restart the board by pressing the RESET button. The following welcome screen is displayed on the terminal.

From this point, start entering the commands directly or type help to get the list of available commands along with their usage guidelines.

3.2. Configuring the sensors

Through the CLI interface, a user can configure the supported sensors for sensing and condition monitoring applications. The list of all the supported sensors can be displayed on the CLI console by entering the command sensor_info. This command prints the list of the supported sensors along with their ids as shown in the image below. The user can configure these sensors using these ids. The configurable options for these sensors include:

- enable: to activate or deactivate the sensor,

- ODR: to set the output data rate of the sensor from the list of available options, and

- FS: to set the full-scale range from the list of available options.

The current value of any of the parameters for a given sensor can be printed using the command:

$ sensor_get <sensor_id> <param>

or all the information about the sensor can be printed using the command:

$ sensor_get <sensor_id> all

Similarly, the values for any of the available configurable parameters can be set through the command:

$ sensor_set <sensor_id> <param> <val>

The snippet below shows the complete example of getting and setting these values along with old and changed values.

$ sensor_info

imp34dt05 ID=0 , type=MIC

iis2iclx ID=1 , type=ACC

stts22h ID=2 , type=TEMP

ilps22qs ID=3 , type=PRESS

iis2dlpc ID=4 , type=ACC

imp23absu ID=5 , type=MIC

iis2mdc ID=6 , type=MAG

ism330dhcx ID=7 , type=ACC

ism330dhcx ID=8 , type=GYRO

ism330dhcx ID=9 , type=MLC

iis3dwb ID=10, type=ACC

-------

11 sensors supported

$ sensor_get 10 all

enable = false

nominal ODR = 26667.00 Hz, latest measured ODR = 0.00 Hz

Availabe ODRs:

26667.00 Hz

fullScale = 16.00 g

Available fullScales:

2.00 g

4.00 g

8.00 g

16.00 g

$ sensor_set 10 enable 1

sensor 10: enable

$ sensor_set 10 FS 4

sensor FS: 4.00

$ sensor_get 10 all

enable = true

nominal ODR = 26667.00 Hz, latest measured ODR = 0.00 Hz

Availabe ODRs:

26667.00 Hz

fullScale = 4.00 g

Available fullScales:

2.00 g

4.00 g

8.00 g

16.00 g
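When the console is driven from a host script, it can help to look up sensor ids by name instead of hard-coding them. The following is a minimal Python sketch, assuming the sensor_info output has been captured as text in the format shown above. The helper names parse_sensor_info and sensor_set_commands are hypothetical and are not part of the function pack.

```python
import re

# Sample text copied from the sensor_info listing in this section.
SENSOR_INFO = """\
imp34dt05 ID=0 , type=MIC
iis2iclx ID=1 , type=ACC
stts22h ID=2 , type=TEMP
ilps22qs ID=3 , type=PRESS
iis2dlpc ID=4 , type=ACC
imp23absu ID=5 , type=MIC
iis2mdc ID=6 , type=MAG
ism330dhcx ID=7 , type=ACC
ism330dhcx ID=8 , type=GYRO
ism330dhcx ID=9 , type=MLC
iis3dwb ID=10, type=ACC
"""

# One sensor per line: "<name> ID=<n> , type=<TYPE>" (spacing around the comma varies).
LINE_RE = re.compile(r"^(\w+)\s+ID=(\d+)\s*,\s*type=(\w+)$")


def parse_sensor_info(text):
    """Return {(name, type): id} for every line that matches the listing format."""
    sensors = {}
    for line in text.splitlines():
        match = LINE_RE.match(line.strip())
        if match:
            name, sensor_id, sensor_type = match.groups()
            sensors[(name, sensor_type)] = int(sensor_id)
    return sensors


def sensor_set_commands(sensor_id, **params):
    """Build one 'sensor_set <id> <param> <val>' command string per keyword argument."""
    return [f"sensor_set {sensor_id} {name} {value}" for name, value in params.items()]


sensors = parse_sensor_info(SENSOR_INFO)
vibration_id = sensors[("iis3dwb", "ACC")]
for command in sensor_set_commands(vibration_id, enable=1, FS=4):
    print(command)
```

The sketch only builds command strings. Sending them over the serial link is left out because it depends on the host setup.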

4. Inertial data classification with STM32Cube.AI

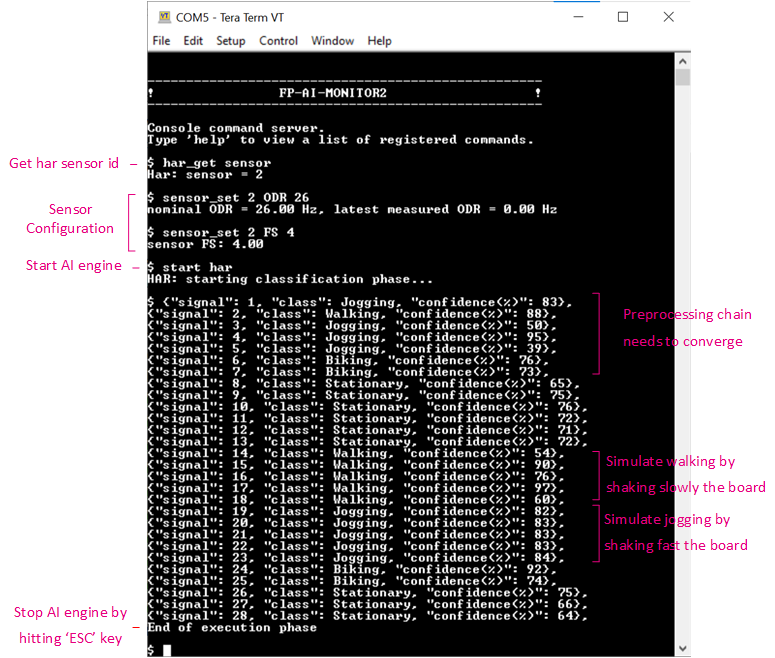

The CLI application comes with a prebuilt Human Activity Recognition model. This functionality is started by typing the command:

$ start har

Note that the provided HAR model is built with a dataset created using the ISM330DHCX_ACC sensor with ODR = 26 and FS = 4. To achieve the best performance, the user must apply these parameters to the sensor configuration using the sensor_set command as provided in the Command Summary table.

The $ start har command starts the inference on the accelerometer data and predicts the performed activity along with the confidence. The supported activities are:

- Stationary,

- Walking,

- Jogging, and

- Biking.
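A prediction "along with the confidence" typically means picking the class with the highest score from the model output. The Python sketch below illustrates that step only. The label order and the example scores are assumptions for illustration, since the real order is fixed by the trained model.

```python
# Label order is an assumption for illustration; the trained model defines the real order.
HAR_LABELS = ["Stationary", "Walking", "Jogging", "Biking"]


def top_activity(scores, labels=HAR_LABELS):
    """Return (label, confidence) for the highest score in a list of class probabilities."""
    if len(scores) != len(labels):
        raise ValueError("one score per label is required")
    best = max(range(len(scores)), key=lambda i: scores[i])
    return labels[best], scores[best]


# Hypothetical model output for one window of accelerometer data.
label, confidence = top_activity([0.05, 0.80, 0.10, 0.05])
print(label, confidence)
```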

The following figure is a screenshot of the normal working session of the har command in the CLI application.

5. Anomaly detection with NanoEdge™ AI library

FP-AI-MONITOR2 includes a pre-integrated stub which is easily replaced by an AI condition monitoring library generated and provided by NanoEdge™ AI Studio. This stub simulates the NanoEdge™ AI-related functionalities, such as running learning and detection phases on the edge.

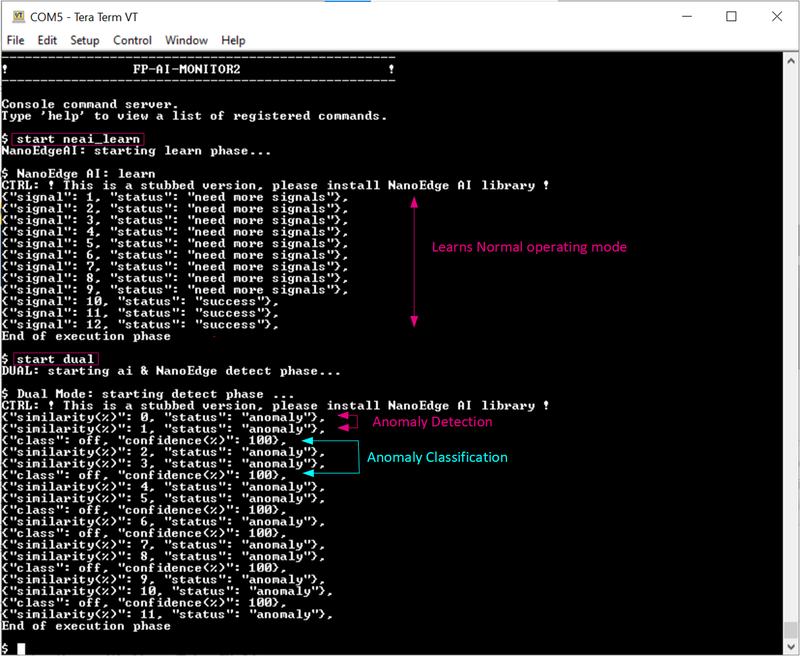

The learning phase is started by issuing a command $ start neai_learn from the CLI console or by long-pressing the [USR] button. The learning process is reported either by slowly blinking the green LED light on STEVAL-STWINBX1 or in the CLI as shown below:

$ NanoEdge AI: learn

CTRL:! This is a stubbed version, please install the NanoEdge AI library!

{"signal": 1, "status": "need more signals"},

{"signal": 2, "status": "need more signals"},

:

:

{"signal": 10, "status": success}

{"signal": 11, "status": success}

:

:

End of execution phase

The CLI reports the learning progress for every signal learned. The NanoEdge AI library needs to learn at least ten signals, so for the first nine signals the status message 'need more signals' is printed along with the signal id. Once ten signals are learned, the status 'success' is printed. The learning can be stopped by pressing the ESC key on the keyboard or simply by pressing the [USR] button.

Similarly, the user starts the condition monitoring process by issuing the command $ start neai_detect. This starts the inference phase. The anomaly detection phase checks the similarity of the presented signal with the learned normal signals. If the similarity is less than the set threshold (default: 90%), a message is printed in the CLI reporting the anomaly along with the similarity of the anomalous signal.

The process is stopped by pressing the ESC key on the keyboard or pressing the [USR] button.

This behavior is shown in the snippet below:

$ start neai_detect

NanoEdgeAI: starting detect phase...

$ NanoEdge AI: detect

CTRL: ! This is a stubbed version, please install NanoEdge AI library !

{"signal": 1, "similarity": 0, "status": anomaly},

{"signal": 2, "similarity": 1, "status": anomaly},

{"signal": 3, "similarity": 2, "status": anomaly},

:

:

{"signal": 90, "similarity": 89, "status": anomaly},

{"signal": 91, "similarity": 90, "status": anomaly},

{"signal": 102, "similarity": 0, "status": anomaly},

{"signal": 103, "similarity": 1, "status": anomaly},

{"signal": 104, "similarity": 2, "status": anomaly},

End of execution phase
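The log lines in this section resemble JSON, but some status values are unquoted, so a strict JSON parser would be likely to reject them. The following Python sketch shows one way to extract the fields with a regular expression when post-processing a captured console log. The function name parse_log_line is hypothetical.

```python
import re

# Matches "key": "quoted value" or "key": bare_value inside one log line.
FIELD_RE = re.compile(r'"(\w+)":\s*(?:"([^"]*)"|([^,}]+))')


def parse_log_line(line):
    """Return a dict of the fields in one log line, or None for non-record lines."""
    line = line.strip()
    if not line.startswith("{"):
        return None
    record = {}
    for key, quoted, bare in FIELD_RE.findall(line):
        value = bare.strip() if bare else quoted
        # Numeric fields such as "signal" and "similarity" are converted to int.
        record[key] = int(value) if value.isdigit() else value
    return record or None


sample = [
    '{"signal": 1, "similarity": 0, "status": anomaly},',
    '{"signal": 2, "similarity": 1, "status": anomaly},',
    "End of execution phase",
]
records = [r for r in map(parse_log_line, sample) if r is not None]
anomalies = [r["signal"] for r in records if r.get("status") == "anomaly"]
print(anomalies)
```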

In addition to the CLI, the status is also shown using the LEDs on the STEVAL-STWINBX1. A fast-blinking green LED shows that detection is in progress. Whenever an anomaly is detected, the orange LED blinks twice to report it. If not enough signals (at least 10) have been learned, a message saying "need more signals" appears with a similarity value equal to 0.

NOTE: This behavior is simulated using a stub library in which the similarity starts from 0 when the detection phase starts and increments with the signal count. Once the similarity reaches 100, it resets to 0. One can see that anomalies are not reported when the similarity is between 90 and 100.
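The stub behavior described in this note can be reproduced in a few lines of Python. This is only a sketch inferred from the detection log above, not the stub's source code. In particular, the log shows a similarity of exactly 90 still being reported as an anomaly, so the comparison here is inclusive, and that is an assumption.

```python
def stub_similarity(signal):
    """Similarity shown by the stub for a 1-based signal count.

    The value counts 0, 1, ..., 100 and then wraps back to 0.
    """
    return (signal - 1) % 101


def is_reported(similarity, threshold=90):
    # Assumption: inclusive comparison, because the log reports similarity 90 as an anomaly.
    return similarity <= threshold


for signal in (1, 91, 92, 101, 102):
    similarity = stub_similarity(signal)
    print(signal, similarity, "anomaly" if is_reported(similarity) else "not reported")
```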

5.1. Additional parameters in condition monitoring

For user convenience, the CLI application also provides handy options to easily fine-tune the inference and learning processes. The list of all the configurable variables is available by issuing the following command:

$ neai_get all

signals = 0

sensitivity = 1.00

threshold = 90

timer = 0 ms

sensor = 7

Each of these parameters is configurable using the neai_set <param> <val> command.

This section provides information on how to use these parameters to control the learning and detection phase. By setting the "signals" and "timer" parameters, the user can control how many signals or for how long the learning and detection is performed (if both parameters are set the learning or detection phase stops whenever the first condition is met). For example, to learn 10 signals, the user issues this command, before starting the learning phase as shown below.

$ neai_set signals 10

signals set to 10

$ start neai_learn

NanoEdgeAI: starting learn phase...

$ NanoEdge AI: learn

CTRL: ! This is a stubbed version, please install NanoEdge AI library !

{"signal": 1, "status": "need more signals"},

{"signal": 2, "status": "need more signals"},

...

{"signal": 9, "status": "need more signals"},

{"signal": 10, "status": "success"},

End of execution phase

If both of these parameters are set to "0" (default value), the learning and detection phases run indefinitely.
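The interaction between the "signals" and "timer" parameters can be summarized as a single stop condition. The Python sketch below models the rule stated in this section, where the first limit reached ends the phase and a value of 0 disables a limit. It is an illustration and not the firmware logic, and the function and argument names are hypothetical.

```python
def phase_should_stop(signals_done, elapsed_ms, signals=0, timer_ms=0):
    """Return True once either configured limit is reached; a limit of 0 is disabled."""
    hit_signals = signals > 0 and signals_done >= signals
    hit_timer = timer_ms > 0 and elapsed_ms >= timer_ms
    return hit_signals or hit_timer


# signals = 10, timer = 0: stops after the tenth signal regardless of elapsed time.
print(phase_should_stop(signals_done=10, elapsed_ms=123456, signals=10))
# Both limits at 0 (default): never stops on its own.
print(phase_should_stop(signals_done=10_000, elapsed_ms=10_000_000))
```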

The threshold parameter is used to report anomalies. An anomaly is reported for any signal whose similarity is below the threshold value. The default threshold value used in the CLI application is 90. Users can change this value with the neai_set threshold <val> command.

Finally, the sensitivity parameter is used as an emphasis parameter. The default value is 1. Increasing the sensitivity makes the signal matching stricter, while reducing it relaxes the similarity calculation, resulting in higher matching values.

For further details on how NanoEdge™ AI libraries work users are invited to read the detailed documentation of NanoEdge™ AI Studio.

6. n-class classification with NanoEdge™ AI

This section provides an overview of the classification application provided in FP-AI-MONITOR2 based on the NanoEdge™ AI classification library. FP-AI-MONITOR2 includes a pre-integrated stub which is easily replaced by an AI classification library generated using NanoEdge™ AI Studio. This stub simulates the NanoEdge™ AI-classification-related functionality, such as running the classification by simply iterating between two classes for ten consecutive signals on the edge.

Unlike the anomaly detection library, the classification library from the NanoEdge™ AI Studio comes with static knowledge of the data and does not require any learning on the device. This library contains the functions based on the provided sample data to best classify one class from another and rightfully assign a label to it when performing the detection on the edge. The classification application powered by NanoEdge™ AI can be simply started by issuing a command $ start neai_class as shown in the snippet below.

$ start neai_class

NanoEdgeAI: starting classification phase...

$ CTRL: ! This is a stubbed version, please install the NanoEdge AI library!

NanoEdge AI: classification

{"signal": 1, "class": Class1}

{"signal": 2, "class": Class1}

:

:

{"signal": 10, "class": Class1}

{"signal": 11, "class": Class2}

{"signal": 12, "class": Class2}

:

:

{"signal": 20, "class": Class2}

{"signal": 21, "class": Class1}

:

:

End of execution phase

The CLI shows that for the first ten samples the class is detected as "Class1" while for the upcoming ten samples "Class2" is detected as the current class. The classification phase can be stopped by pressing the ESC key on the keyboard or simply by pressing the [USR] button.

NOTE : This behavior is simulated using a STUB library where the classes are iterated by displaying ten consecutive labels for one class and then ten labels for the next class and so on.
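The label sequence produced by the classification stub follows directly from the signal count. The short Python sketch below reproduces the pattern shown in the log above, with ten signals per class before cycling to the next class. It is an illustration rather than the stub's source code.

```python
def stub_class(signal, labels=("Class1", "Class2"), run_length=10):
    """Label shown by the stub for a 1-based signal count."""
    return labels[((signal - 1) // run_length) % len(labels)]


for signal in (1, 10, 11, 20, 21):
    print(signal, stub_class(signal))
```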

7. Dual-mode application with STM32Cube.AI and NanoEdge™ AI

In addition to the three applications described in the sections above, the FP-AI-MONITOR2 also provides an advanced execution phase called the dual application mode. This mode uses anomaly detection based on the NanoEdge™ AI library and performs classification using a prebuilt ANN model based on an analog microphone. The dual mode works in a power-saving configuration: a low-power anomaly detection algorithm based on the NanoEdge™ AI library runs continuously on vibration data, and the ANN classification based on a high-frequency analog microphone pipeline is triggered only if an anomaly is detected. Other than this, both applications are independent of each other. It is also worth mentioning that the dual mode is created to work for a USB fan running at maximum speed and does not work very well when tested at other speeds.

To start testing the dual application execution phase, the user first needs to train the anomaly detection library using the $ start neai_learn command with the fan at its highest speed. Once the normal conditions have been learned, the user can start the dual application by issuing the command $ start dual, as shown in the snippet below:

Whenever an anomaly is detected, meaning a signal with a similarity of less than 90%, the ultrasound-based classifier is started. Both applications run in asynchronous mode. The ultrasound-based classification model takes almost one second of data, preprocesses it using mel-frequency cepstral coefficients (MFCC), and feeds it to a pre-trained neural network. It then prints the label of the class along with the confidence. The network is trained for four classes:

- 'Off': the fan is off,

- 'Normal': the fan is running at maximum speed in normal condition,

- 'Clogging': the fan is clogged while running at maximum speed, and

- 'Friction': friction is being applied to the rotating axis.

As soon as the anomaly detection reports the signal as normal again, the ultrasound-based ANN is suspended.
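The gating between the two pipelines can be sketched as follows. This Python example is a simplified, sequential illustration of the idea that the ultrasound classifier runs only when the vibration signal is flagged as anomalous. The firmware runs the two applications asynchronously, and the classify callable is a hypothetical stand-in for the ultrasound pipeline.

```python
def dual_mode_step(similarity, classify, threshold=90):
    """Process one vibration result and call the classifier only on an anomaly.

    `classify` is a hypothetical callable returning (label, confidence).
    """
    if similarity < threshold:
        label, confidence = classify()
        return {"anomaly": True, "label": label, "confidence": confidence}
    # Normal signal: the classifier is not invoked, which is what saves power.
    return {"anomaly": False, "label": None, "confidence": None}


def fake_classifier():
    # Placeholder result for illustration only.
    return "Clogging", 0.93


print(dual_mode_step(97, fake_classifier))
print(dual_mode_step(42, fake_classifier))
```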

8. Updating the AI models

8.1. Anomaly detection with NanoEdge™ AI

Once the libraries are generated and downloaded from NanoEdge™ AI Studio, the next step is to link them to FP-AI-MONITOR2 and run them on the STWIN.box. FP-AI-MONITOR2 comes with library stubs in place of the actual libraries generated by NanoEdge™ AI Studio, which simplifies the linking of the generated libraries. To link the actual libraries, the user needs to copy the generated header file NanoEdgeAI.h and library file libneai.a over the existing stub files in the Inc and lib folders, respectively. These folders are located in /FP-AI-MONITOR2_V1.0.0/Middlewares/ST/NanoEdge_AI_Library/.

Once these files are copied, the project must be rebuilt and programmed on the sensor board to link the libraries correctly. To do so, go to the /FP-AI-MONITOR2_V1.0.0/Projects/STWIN.box/Applications/FP-AI-MONITOR2/STM32CubeIDE/ folder and double-click the .project file to open the project in STM32CubeIDE.

To build and install the project click on the play button and wait for the successful download message as shown in the section Build and Install Project.

Once the sensor board is successfully programmed, the welcome message appears in the CLI (Tera Term terminal). If the message does not appear try to reset the board by pressing the RESET button.

8.1.1. Testing the anomaly detection with NanoEdge™ AI

Once the STWIN.box is programmed with the firmware containing a valid library, the condition monitoring libraries are ready to be tested on the sensor board. The learning and detection commands can be issued, and the stub warning no longer appears.

To achieve the best performance, the user must perform the learning using the same sensor configuration that was used during the contextual data acquisition. For example, the snippet below configures the ISM330DHCX accelerometer (sensor_id 7 in the sensor_info listing of section 3.2) with the following parameters:

- enable = 1,

- ODR = 1666,

- FS = 4.

$ sensor_set 7 enable 1

sensor 7: enable

$ sensor_set 7 ODR 1666

nominal ODR = 1666.00 Hz, latest measured ODR = 0.00 Hz

$ sensor_set 7 FS 4

sensor FS: 4.00

Also, to get stable results in the neai_detect phase, the user must perform the learning for all the normal conditions before starting the detection. Running the detection phase without performing the learning first produces erratic results. An indication of the minimum number of signals to use for learning is provided in Step 4 of the library generation in NanoEdge™ AI Studio.

8.2. n-class classification with NanoEdge™ AI

FP-AI-MONITOR2 comes with library stubs in place of the actual libraries generated by NanoEdge™ AI Studio, which simplifies the linking of the generated libraries. In the n-class classification library, the learned features of the classes are provided as a knowledge header file, in addition to the .h and .a files. So, unlike the anomaly detection library, linking the classification library requires copying two header files, NanoEdgeAI_ncc.h and knowledge_ncc.h, and the library file libneai_ncc.a over the existing files in the Inc and lib folders, respectively. These folders are located in /FP-AI-MONITOR2_V1.0.0/Middlewares/ST/NanoEdge_AI_Library/.

In addition to this the user is required to add the labels of the classes to expect during classification process by updating the sNccClassLabels array in FP-AI-MONITOR2_prj/Application/Src/AppController.c file:

/**

* Specifies the labels for the classes of the NEAI classification demo.

*/

static const char* sNccClassLabels[] = {

"Unknown",

"Off",

"Normal",

"Clogging",

"Friction"

};

Note that the first label is "Unknown" and it is required to be left as it is. The real labels for the classification come after this. The order of the labels [Off, Normal, Clogging, Friction] has to be the same as was used to provide the data in the process of library generation in NanoEdge™ AI Studio.
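The role of the reserved first entry can be illustrated with a small lookup. The Python sketch below mirrors the C array above and assumes that class ids start at 1, with index 0 kept for "Unknown". That numbering is inferred from the label order described here and is not taken from the library documentation.

```python
# Mirrors sNccClassLabels; index 0 is the reserved "Unknown" entry.
NCC_CLASS_LABELS = ["Unknown", "Off", "Normal", "Clogging", "Friction"]


def ncc_label(class_id, labels=NCC_CLASS_LABELS):
    """Map a class id to its label; ids outside 1..len(labels)-1 fall back to 'Unknown'."""
    if 1 <= class_id < len(labels):
        return labels[class_id]
    return labels[0]


for class_id in (1, 3, 0, 9):
    print(class_id, ncc_label(class_id))
```

If the labels were listed in a different order than the one used in NanoEdge™ AI Studio, the lookup would still succeed but return the wrong name, which is why the order matters.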

After this step, the project must be rebuilt and programmed on the sensor board to link the libraries correctly. To build and install the project click on the play button and wait for the successful download message as shown in the section Build and Install Project.

Once the sensor board is successfully programmed, the welcome message appears in the CLI (Tera Term terminal). If the message does not appear try to reset the board by pressing the RESET button.

8.2.1. Testing the n-class classification with NanoEdge™ AI

Once the STWIN.box is programmed with the firmware containing a valid n-class classification library, it is ready to be tested.

To achieve the best performance, the user must perform the data logging and the testing with the same sensor configuration. For example, the snippet below configures the ISM330DHCX accelerometer (sensor_id 7 in the sensor_info listing of section 3.2) with the following parameters:

- enable = 1,

- ODR = 1666,

- FS = 4.

$ sensor_set 7 enable 1

sensor 7: enable

$ sensor_set 7 ODR 1666

nominal ODR = 1666.00 Hz, latest measured ODR = 0.00 Hz

$ sensor_set 7 FS 4

sensor FS: 4.00

After the configurations of the sensor are completed, the user can start the NEAI classification by issuing the command, $ start neai_class.

8.3. X-CUBE-AI models

The CLI example has two prebuilt models integrated with it: one for HAR and another for USC. The user can update these models by following the steps below. As described in the code generation process, for the sake of simplicity, the code for both models is generated at once. In this example, the provided code for the combination of SVC and USC models (USC_4_Class_+_SVC_4_Class) is replaced with the prebuilt and converted C code from the HAR (CNN) and USC CNN models provided in the /FP-AI-MONITOR2_V1.0.0/Utilities/AI_Resources/models/USC_4_Class_+_IGN_4_Class/ folder.

To update the model, the user must copy and replace the following files in the /FP-AI-MONITOR2_V1.0.0/Projects/STWIN.box/Applications/FP-AI-MONITOR2/X-CUBE-AI/App/ folder:

- app_x-cube-ai.c,

- app_x-cube-ai.h,

- har_network.c,

- har_network.h,

- har_network_config.h,

- har_network_data.h,

- har_network_data.c,

- har_network_generate_report.txt,

- usc_network.c,

- usc_network.h,

- usc_network_config.h,

- usc_network_data.h,

- usc_network_data.c, and

- usc_network_generate_report.txt.

After the files are copied, the user must open the project with STM32CubeIDE. To do so, go to the /FP-AI-MONITOR2_V1.0.0/Projects/STWIN.box/Applications/FP-AI-MONITOR2/STM32CubeIDE/ folder and double-click the .project file. Once the project is opened, go to the /FP-AI-MONITOR2_V1.0.0/Projects/STWIN.box/Applications/FP-AI-MONITOR2/X-CUBE-AI/App/app_x-cube-ai.c file and comment out the following lines of code as shown below:

#include "app_x-cube-ai.h"

//#include "bsp_ai.h"

//#include "aiSystemPerformance.h"

#include "ai_datatypes_defines.h"

/* USER CODE BEGIN includes */

/* USER CODE END includes */

/* IO buffers ----------------------------------------------------------------*/

//DEF_DATA_IN

//

//DEF_DATA_OUT

/* Activations buffers -------------------------------------------------------*/

AI_ALIGNED(32)

static uint8_t pool0[AI_USC_NETWORK_DATA_ACTIVATION_1_SIZE];

ai_handle data_activations0[] = {pool0};

ai_handle data_activations1[] = {pool0};

/* Entry points --------------------------------------------------------------*/

//void MX_X_CUBE_AI_Init(void)

//{

// MX_UARTx_Init();

// aiSystemPerformanceInit();

// /* USER CODE BEGIN 5 */

// /* USER CODE END 5 */

//}

//

//void MX_X_CUBE_AI_Process(void)

//{

// aiSystemPerformanceProcess();

// HAL_Delay(1000); /* delay 1s */

// /* USER CODE BEGIN 6 */

// /* USER CODE END 6 */

//}

8.3.1. Building and installing the project

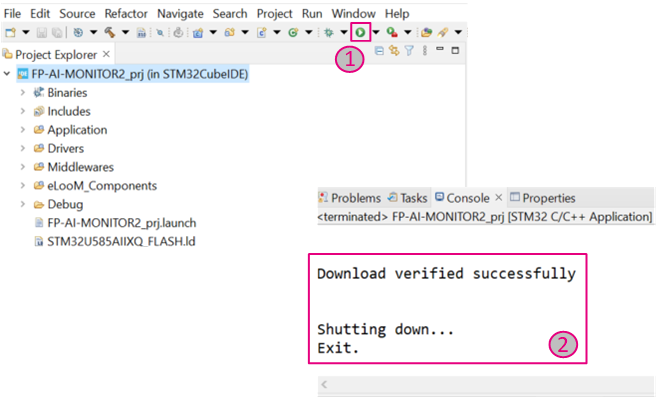

Then build and install the project on the STWIN sensor board by pressing the play button as shown in the figure below.

A message saying Download verified successfully indicates the new firmware is programmed in the sensor board.

9. FP-AI-MONITOR2 Utilities

For the ease of the users, FP-AI-MONITOR2 comes with a set of utilities to record and prepare the datasets and to generate the AI models for the supported AI applications. These utilities are in the FP-AI-MONITOR2_V1.0.0/Utilities/ directory, which contains two subdirectories:

- AI_Resources (all the Python resources related to AI), and

- DataLog (binary and helper scripts for High-Speed Datalogger).

The following section briefly describes the contents of the AI_Resources folder.

9.1. AI_Resources

FP-AI-MONITOR2 comes equipped with four AI applications, and AI_Resources contains the Python™ scripts, the sample datasets, dataset placeholders, and the code to prepare these datasets for generating the AI models supported in this function pack.

The AI_Resources directory contains the following subdirectories:

- Dataset: Contains different datasets/holders for the datasets, used in the function pack

  - AST, a small subsample from an ST proprietary dataset for HAR.

  - HSD_Logged_Data, different datasets logged using High-speed Datalogger binary.

    - Fan12CM, Datasets logged on 12CM USB fan using different on-board sensors of STEVAL-STWINBX1.

    - HAR, Human activity recognition dataset acquired using the STEVAL-STWINBX1.

  - WISDM, a placeholder for downloading and placing the WISDM dataset for HAR.

- models: Contains the pre-generated and trained models for HAR and USC along with their C-code

  - USC_4_Class_+_IGN_4_Class contains a combination of CNN for HAR and CNN for USC.

  - USC_4_Class_+_SVC_4_Class contains a combination of SVC for HAR and CNN for USC.

- NanoEdgeAi: Contains the helper scripts to prepare the data for the NanoEdge™ AI Studio from the HSDatalogs to generate the anomaly detection libraries.

- signal_processing_lib: Contains the code for various signal processing modules (there is equivalent embedded code available for all the modules in the function pack)

- Training Scripts: Contains the Python scripts for HAR and AnomalyClassification.

  - HAR subdirectory at the path FP-AI-MONITOR2_V1.0.0/Utilities/AI_Resources/Training Scripts/HAR/ contains three Jupyter notebooks:

    - HAR_with_CNN.ipynb (a complete step-by-step code to build a sample HAR model based on Convolutional Neural Networks),

    - HAR_with_SVC.ipynb (a complete step-by-step code to build a sample HAR model based on Support Vector Machine Classifier), and

    - HAR_with_NEAI.ipynb (a complete step-by-step code to build NanoEdge™ AI Studio compliant files for all activities to generate n-Class classification libraries).

  - AnomalyClassification contains two subdirectories for two different use cases to perform anomaly classification.

    - Ultrasound directory contains the Python scripts to prepare the dataset and create a CNN based model for the anomaly classification using the ultrasound data logged through IMP23ABSU sensor (an analog microphone).

    - NanoEdgeAI_ACC contains the Python code to prepare the .csv files for ACC data for different conditions on the fan for the anomaly classification library generation from NanoEdge™ AI Studio.

10. Data collection

Data collection is outside the scope of this function pack. However, to simplify it for the users, this section provides a step-by-step guide to logging data on the STEVAL-STWINBX1 using the high-speed datalogger.

Step 1: Program the STEVAL-STWINBX1 with the HSDatalog FW

For this function pack and article, the STEVAL-STWINBX1 can be programmed with the HSDatalog firmware using the precompiled HSDatalog.bin file for FP-SNS-DATALOG2, which is provided in the /FP-AI-MONITOR2_V1.0.0/Utilities/Datalog/ directory. The board can be programmed by following the drag-and-drop procedure shown in section 2.3.

Step 2: Place a DeviceConfig.json file on the SD card

The next step is to copy a DeviceConfig.json file to the SD card. This file contains the sensor configurations to be used for the data logging.

The users can use one of the sample .json files provided in the FP-AI-MONITOR2_V1.0.0/Utilities/Datalog/STWIN_config_examples/ directory.

Note: The configuration file must be named exactly DeviceConfig.json, otherwise the process does not work.
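Since the file name must match exactly, copying a sample file under the required name can be scripted to avoid typos. This is a minimal Python sketch with stated assumptions: the function name install_device_config is hypothetical, and placing the file in the root of the SD card is an assumption rather than something this article specifies.

```python
import json
import shutil
from pathlib import Path

REQUIRED_NAME = "DeviceConfig.json"  # exact file name expected on the SD card


def install_device_config(sample_json, sd_card_root):
    """Copy a sample configuration to the SD card under the required name.

    Assumption: the file belongs in the root folder of the SD card.
    """
    sample_json = Path(sample_json)
    # Parse first so that a corrupted or non-JSON file is rejected before copying.
    with sample_json.open(encoding="utf-8") as handle:
        json.load(handle)
    target = Path(sd_card_root) / REQUIRED_NAME
    shutil.copyfile(sample_json, target)
    return target
```

The first argument would be one of the files from the STWIN_config_examples directory, and the second the mount point or drive letter of the SD card on the PC.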

Step 3: Insert the SD card into the STWIN board

Insert an SD card in the STEVAL-STWINBX1.

Note: Data logging with the high-speed datalogger requires a FAT32-formatted microSD™ card.

Step 4: Reset the board

Reset the board. The orange LED blinks once per second. The custom sensor configurations provided in DeviceConfig.json are loaded from the file.

Step 5: Start the data log

Press the [USR] button to start data acquisition on the SD card. The orange LED turns off and the green LED starts blinking to signal that sensor data is being written to the SD card.

Step 6: Stop the data logging

Press the [USR] button again to stop data acquisition. Do not unplug the SD card, turn the board off, or perform a [RESET] before stopping the acquisition; otherwise, the data on the SD card are corrupted.

Step 7: Retrieve data from SD card

Remove the SD card and insert it into an appropriate SD card slot on the PC. The log files are stored in STWIN_### folders, one for every acquisition, where ### is a sequential number determined by the application to ensure that log file names are unique. Each folder contains:

- a file for each active sub-sensor, called SensorName_subSensorName.dat, containing raw sensor data coupled with timestamps,

- a DeviceConfig.json with specific information about the device configuration, necessary for correct data interpretation (check that the sensor configurations in this file are the intended ones), and

- an AcquisitionInfo.json with information about the acquisition.
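A quick inventory of the card helps confirm that every acquisition folder is complete before moving the data. The sketch below is a hedged Python example: the function name list_acquisitions is hypothetical, and it only inspects folder and file names as described above, without parsing the .dat files.

```python
import re
from pathlib import Path

ACQ_PATTERN = re.compile(r"STWIN_(\d+)")  # STWIN_### acquisition folders


def list_acquisitions(sd_card_root):
    """Return one summary dict per STWIN_### folder, sorted by sequence number."""
    results = []
    for folder in Path(sd_card_root).iterdir():
        match = ACQ_PATTERN.fullmatch(folder.name)
        if not (folder.is_dir() and match):
            continue  # skip unrelated files and folders
        results.append({
            "number": int(match.group(1)),
            "folder": folder,
            "data_files": sorted(p.name for p in folder.glob("*.dat")),
            "has_device_config": (folder / "DeviceConfig.json").is_file(),
            "has_acquisition_info": (folder / "AcquisitionInfo.json").is_file(),
        })
    return sorted(results, key=lambda item: item["number"])
```

A folder with an empty data_files list or a missing JSON file is a hint that the acquisition was interrupted or that no sub-sensor was active.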

10.1. DeviceConfig.json file

The DeviceConfig.json file contains the configurations of all the onboard sensors of the STEVAL-STWINBX1 in JSON format. The device object consists of three attributes: deviceInfo, sensor, and tagConfig.

{

"UUIDAcquisition": "287cddd8-3f95-4449-9350-799d16296c2b",

"JSONVersion": "1.1.0",

"device": {

"deviceInfo": { },

"sensor": [ ],

"tagConfig": { }

}

}

deviceInfo identifies the device.

{

"UUIDAcquisition": "287cddd8-3f95-4449-9350-799d16296c2b",

"JSONVersion": "1.1.0",

"device": {

"deviceInfo": {

"serialNumber": "000900115652500420303153",

"alias": "STWIN_001",

"partNumber": "STEVAL-STWINBX1",

"URL": "www.st.com\/stwin",

"fwName": "FP-SNS-DATALOG2",

"fwVersion": "1.2.0",

"dataFileExt": ".dat",

"dataFileFormat": "HSD_1.0.0",

"nSensor": 9

},

"sensor": [ ],

"tagConfig": { }

}

}
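The top-level layout described above can be checked programmatically before a log is used. The following is a minimal Python sketch, assuming the layout shown in the examples: the function names missing_attributes and describe_device are hypothetical, and the sample dictionary is a trimmed copy of the example values (a real file would be read with json.load).

```python
REQUIRED_ATTRIBUTES = ("deviceInfo", "sensor", "tagConfig")


def missing_attributes(config):
    """Return the expected attributes that are absent from the device object."""
    device = config.get("device", {})
    return [name for name in REQUIRED_ATTRIBUTES if name not in device]


def describe_device(config):
    """Build a one-line summary from the deviceInfo attribute."""
    info = config["device"]["deviceInfo"]
    return (f'{info["alias"]} ({info["partNumber"]}), '
            f'{info["fwName"]} {info["fwVersion"]}, nSensor={info["nSensor"]}')


# Trimmed copy of the example values; not a complete DeviceConfig.json.
sample = {
    "JSONVersion": "1.1.0",
    "device": {
        "deviceInfo": {
            "alias": "STWIN_001",
            "partNumber": "STEVAL-STWINBX1",
            "fwName": "FP-SNS-DATALOG2",
            "fwVersion": "1.2.0",
            "nSensor": 9,
        },
        "sensor": [],
        "tagConfig": {},
    },
}

print(missing_attributes(sample))  # prints []
print(describe_device(sample))
```

An empty list from missing_attributes means that all three attributes are present; any returned name points to a truncated or hand-edited file.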

sensor is an array of attributes that describes all the sensors available on board. Each sensor has a unique id, a name, a sensorDescriptor, and a sensorStatus.

"sensor": [

{

"id": 0,

"name": "IIS3DWB",

"sensorDescriptor": { },

"sensorStatus": { }

},

{

"id": 1,

"name": "HTS221",

"sensorDescriptor": { },

"sensorStatus": { }

},

:

:

sensorDescriptor describes the main information about a single sensor through the list of its subSensorDescriptor elements. Each subSensorDescriptor element describes the main information about a single sub-sensor, such as the name, data type, sensor type, available ODR and full-scale values, supported samples per unit of time, and measurement unit.

{

"id": 0,

"name": "IIS3DWB",

"sensorDescriptor": {

"subSensorDescriptor": [

{

"id": 0,

"sensorType": "ACC",

"dimensions": 3,

"dimensionsLabel": [

"x",

"y",

"z"

],

"unit": "g",

"dataType": "int16_t",

"FS": [

2,

4,

8,

16

],

"ODR": [

26667

],

"samplesPerTs": {

"min": 0,

"max": 1000,

"dataType": "int16_t"

sensorStatus describes the actual configuration of the related sensor through the list of its subSensorStatus elements. Each subSensorStatus element describes the actual configuration of a single sub-sensor: whether the sub-sensor is active, the actual ODR (output data rate), the FS (full scale), and so on.

"sensorStatus": {

"subSensorStatus": [

{

"ODR": 26667,

"ODRMeasured": 0,

"initialOffset": 0,

"FS": 16,

"sensitivity": 0.000488,

"isActive": false,

"samplesPerTs": 1000,

"usbDataPacketSize": 3000,

"sdWriteBufferSize": 43520,

"wifiDataPacketSize": 0,

"comChannelNumber": -1,

"ucfLoaded": false

}

]

}
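The sensitivity value in this example is consistent with the selected full scale: 16 g spread over the 32768 positive counts of an int16_t gives about 0.000488 g per count. The Python sketch below illustrates that arithmetic. It assumes that a physical value is obtained by multiplying a raw sample by the sensitivity, which is an assumption of this example rather than something stated in this article, and the function name raw_to_physical is hypothetical.

```python
def raw_to_physical(raw_samples, sensitivity):
    """Scale raw integer samples by the sensitivity from subSensorStatus.

    Assumption: physical value = raw count * sensitivity.
    """
    return [sample * sensitivity for sample in raw_samples]


FS = 16                 # full scale from the example, in g
SENSITIVITY = 0.000488  # sensitivity from the example, in g per count

# 16 / 32768 = 0.00048828125, which rounds to the value stored in the file.
assert abs(FS / 32768 - SENSITIVITY) < 1e-6

# Raw counts of +/-2048 correspond to roughly +/-1 g at this setting.
print(raw_to_physical([0, 2048, -2048], SENSITIVITY))
```

If the FS is changed, the sensitivity changes with it, which is one more reason to keep the same configuration for logging and for detection.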

For this function pack, the only relevant settings are isActive, ODR, and FS. For details, readers are invited to refer to the user manual of the FP-SNS-DATALOG2 package.
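These three settings can be pulled out of a DeviceConfig.json to compare them with the configuration used on the console. The following is a hedged Python sketch: the function name active_settings is hypothetical, and the sample dictionary holds illustrative values that only mimic the layout shown above (a real file would be read with json.load).

```python
def active_settings(config):
    """Return (sensor name, sub-sensor index, ODR, FS) for each active sub-sensor."""
    rows = []
    for sensor in config["device"]["sensor"]:
        statuses = sensor["sensorStatus"]["subSensorStatus"]
        for index, status in enumerate(statuses):
            if status["isActive"]:
                # ODR or FS may be absent for some sub-sensors, hence .get().
                rows.append((sensor["name"], index, status.get("ODR"), status.get("FS")))
    return rows


# Illustrative values only, following the layout of the examples above.
sample = {
    "device": {
        "sensor": [
            {"name": "IIS3DWB", "sensorStatus": {"subSensorStatus": [
                {"ODR": 26667, "FS": 16, "isActive": False},
            ]}},
            {"name": "ISM330DHCX", "sensorStatus": {"subSensorStatus": [
                {"ODR": 1666, "FS": 4, "isActive": True},
                {"ODR": 1666, "FS": 4000, "isActive": False},
            ]}},
        ]
    }
}

for name, index, odr, fs in active_settings(sample):
    print(f"{name} sub-sensor {index}: ODR={odr}, FS={fs}")
```

With the sample values, only the first ISM330DHCX sub-sensor is reported, with the same ODR and FS as in the console example.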

- FP-AI-MONITOR2: Multi-sensor AI data monitoring framework on wireless industrial node, function pack for STM32Cube

- Quick Start Guide: QSG for FP-AI-MONITOR2

- How to perform anomaly detection using FP-AI-MONITOR2: A step-by-step guide to implement an Anomaly Detection solution for a USB powered fan.

- STEVAL-STWINBX1: STWIN SensorTile Wireless Industrial Node development kit

- STM32CubeMX: STM32Cube initialization code generator

- X-CUBE-AI: expansion pack for STM32CubeMX

- NanoEdge™ AI Studio: the first Machine Learning Software specifically developed to run entirely on microcontrollers.

- DBxxxx: Data brief for STEVAL-STWINBX1.

- UM2777: How to use the STEVAL-STWINBX1 SensorTile Wireless Industrial Node for condition monitoring and predictive maintenance applications.