This article describes how X-CUBE-AI supports quantized models.

1. Quantization overview

The X-CUBE-AI code generator can be used to deploy a quantized model. In this article, “quantization” refers to 8-bit linear quantization of a neural network (NN) model. Note that X-CUBE-AI also supports pre-trained Deep Quantized Neural Network (DQNN) models; see the Deep Quantized Neural Network (DQNN) support article (https://wiki.st.com/stm32mcu/wiki/AI:Deep_Quantized_Neural_Network_support).

Quantization is an optimization technique[ST 4] that compresses a 32-bit floating-point model: it reduces the model size (smaller storage size and lower peak memory usage at runtime) and improves CPU/MCU usage, latency, and power consumption, at the cost of a small degradation in accuracy. A quantized model executes some or all of its tensor operations with integers rather than floating-point values. Quantization is one of several optimization techniques (topology-oriented optimization, feature-map reduction, pruning, weight compression, and so on) that can be applied to address a resource-constrained runtime environment.

There are two classical quantization methods: post-training quantization (PTQ) and quantization-aware training (QAT). PTQ is relatively easier to use: it quantizes a pre-trained model using a limited but representative data set (also called the calibration data set). QAT is performed during the training process and often gives better model accuracy.

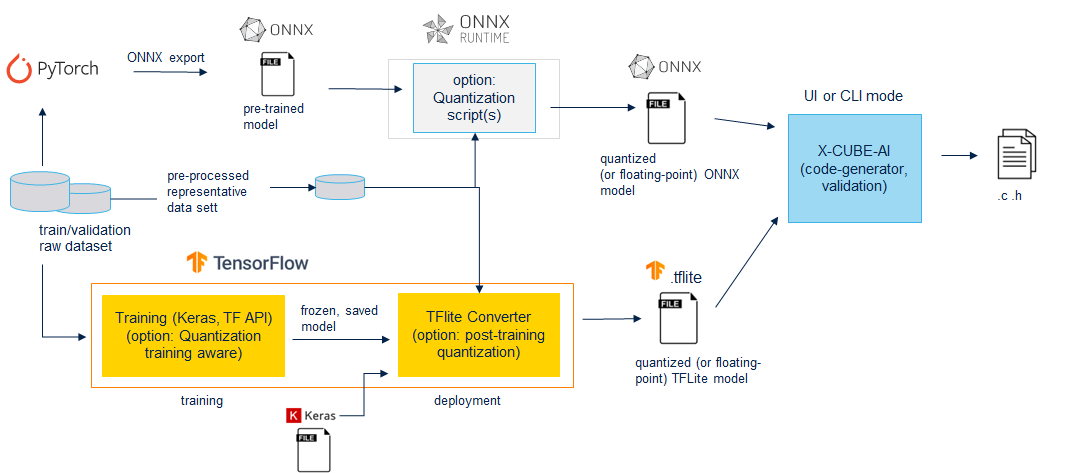

X-CUBE-AI can import different types of quantized models:

- a quantized TensorFlow Lite model generated by a post-training or quantization-aware training process. The calibration is performed by the TensorFlow Lite framework, principally through the “TFLite converter” utility, which exports a TensorFlow Lite file.

- a quantized ONNX model based on the Operator-oriented (QOperator) or the Tensor-oriented (QDQ; Quantize and DeQuantize) format. The first format depends on the supported QOperators (see the QLinearXXX operators, [ONNX]); the second is more generic: DeQuantizeLinear(QuantizeLinear(tensor)) operator pairs are inserted between the original (float) operators to simulate the quantization and dequantization process. Both formats can be generated with the ONNX Runtime services.

Figure 1: quantization flow

For optimization and performance reasons, the deployed C-kernels (specialized C implementations of the deployed operators) support only quantized weights combined with quantized activations. If this pattern is not inferred by the optimizing and rendering passes of the code generator, a floating-point version of the operator is deployed (fallback to 32-bit floating point with inserted QUANTIZE/DEQUANTIZE operators, also known as fake or simulated quantization). Consequently:

- the dynamic quantization approach, which computes the quantization parameters during execution, is NOT supported, in order to limit the overhead and cost of inference in terms of computation and peak memory usage

- mixed models (combining quantized and floating-point operators) are supported

The “analyze”, “validate” and “generate” commands can be used without limitations.

    stm32ai analyze -m <quantized_model_file>.tflite
    stm32ai validate -m <quantized_model>.tflite -vi test_data.npz
    stm32ai analyze -m <quantized_model_file>.onnx

2. Quantized tensors

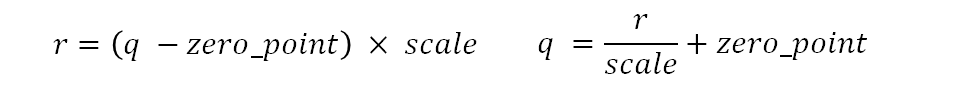

X-CUBE-AI supports 8-bit integer-based (int8 or uint8 data type) arithmetic for quantized tensors, following the representation convention used by Google for quantized models ([2]). Each real number r is represented as a function of its quantized value q, a scale factor (an arbitrary positive real number), and a zero_point parameter. The quantization scheme is an affine mapping of the integers q to the real numbers r. zero_point has the same integer C type as the q data.
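
Written out, the affine mapping described above takes the following form. The symbols $s$ (scale factor) and $z$ (zero_point) are names chosen here for illustration, and $[q_{\min}, q_{\max}]$ stands for the integer range of the data type, that is $[-128, 127]$ for int8 and $[0, 255]$ for uint8.

```latex
r = s \cdot (q - z),
\qquad
q = \operatorname{clamp}\!\left(\operatorname{round}\!\left(\frac{r}{s}\right) + z,\; q_{\min},\; q_{\max}\right)
```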

Precision depends on the scale factor, and the quantized values are linearly distributed around the zero_point value. In both cases (int8 and uint8), the resolution is constant over the represented range, unlike a floating-point representation.
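
The constant resolution can be checked with a few lines of plain Python. This is a minimal sketch, not the code used by X-CUBE-AI or by any converter: the function names, the example range [-1.0, 3.0] and the min/max way of deriving the parameters are assumptions made for illustration.

```python
def affine_params(rmin, rmax, qmin=-128, qmax=127):
    """Derive (scale, zero_point) so that [rmin, rmax] maps onto [qmin, qmax].

    Assumes rmin < rmax. The range is widened to contain 0.0 so that the
    real value zero is exactly representable.
    """
    rmin, rmax = min(rmin, 0.0), max(rmax, 0.0)
    scale = (rmax - rmin) / (qmax - qmin)
    zero_point = round(qmin - rmin / scale)
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(r, scale, zero_point, qmin=-128, qmax=127):
    """Map a real number to its nearest representable integer code."""
    q = round(r / scale) + zero_point
    return max(qmin, min(qmax, q))


def dequantize(q, scale, zero_point):
    """Affine mapping from the integer code back to a real number."""
    return scale * (q - zero_point)


# Hypothetical activation range observed during calibration.
scale, zero_point = affine_params(-1.0, 3.0)

# Distance between consecutive representable real values: always `scale`.
steps = [
    dequantize(q + 1, scale, zero_point) - dequantize(q, scale, zero_point)
    for q in range(-128, 127)
]
```

Every entry of `steps` equals `scale` (up to floating-point rounding), so the worst-case rounding error of a value inside the range is `scale / 2` wherever it falls in the range.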

Figure 2: integer precision

2.1. Per-axis (or per-channel or channelwise) vs per-tensor (or layerwise)

Per-tensor means that the same format (that is, scale/zero_point) is used for the entire tensor (weights or activations). Per-axis, for convolution-based operators, means that there is one scale and/or zero_point per filter, independent of the other channels, which gives better quantization (from an accuracy point of view) with negligible computation overhead.

The per-axis approach is currently the standard method used for quantized convolutional kernels (weight tensors). Activation tensors are always per-tensor. The design of the optimized C-kernels is mainly driven by this approach.
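
The accuracy benefit of the per-axis format can be illustrated with a small pure-Python sketch. The weight values, the helper names and the symmetric [-127, 127] range are assumptions chosen for the example; they are not taken from a real model or from the X-CUBE-AI kernels.

```python
def symmetric_scale(values, qmax=127):
    """Scale of a symmetric int8 format (zero_point = 0) covering `values`."""
    return max(abs(v) for v in values) / qmax


def fake_quant(values, scale, qmax=127):
    """Quantize with zero_point = 0, then map back to real numbers."""
    return [scale * max(-qmax, min(qmax, round(v / scale))) for v in values]


def max_error(values, scale):
    """Largest absolute error introduced by quantizing `values`."""
    return max(abs(v - w) for v, w in zip(values, fake_quant(values, scale)))


# Hypothetical weights of two filters with very different dynamic ranges.
filters = [[0.9, -1.0, 0.5], [0.010, -0.004, 0.007]]

# Per-tensor: a single scale shared by all the filters.
tensor_scale = symmetric_scale([w for f in filters for w in f])
per_tensor_err = [max_error(f, tensor_scale) for f in filters]

# Per-axis: one scale per filter.
axis_scales = [symmetric_scale(f) for f in filters]
per_axis_err = [max_error(f, s) for f, s in zip(filters, axis_scales)]
```

With a shared scale, the second filter is represented with only a couple of distinct integer codes, so `per_tensor_err[1]` is much larger than `per_axis_err[1]`. The first filter, which sets the shared scale, is unaffected.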

2.2. Symmetric vs Asymmetric

Asymmetric means that the tensor can have its zero_point anywhere within the signed 8-bit range [-128, 127] or the unsigned 8-bit range [0, 255]. Symmetric means that the tensor is forced to have a zero_point equal to zero. By forcing zero_point to zero, some kernel optimizations become possible that limit the cost of the operations (for example, off-line pre-calculation). By nature, the activations are asymmetric; consequently, the symmetric format is not supported for activations. For the weights/bias, both asymmetric and symmetric formats are supported.
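
One way to see why a zero weight zero_point helps is to expand the integer accumulation of a dot product. The sketch below is a generic illustration under assumed names and values, not the implementation of the X-CUBE-AI C-kernels: with symmetric weights, the only correction term left depends on constants (the weights and the activation zero_point), so it can be computed off-line.

```python
def acc_general(qa, qw, za, zw):
    """Integer accumulator when both tensors may be asymmetric."""
    return sum((a - za) * (w - zw) for a, w in zip(qa, qw))


def weight_offset(qw, za):
    """Correction term that depends only on constants: computable off-line."""
    return za * sum(qw)


def acc_symmetric_weights(qa, qw, offset):
    """Integer accumulator when the weights are symmetric (zw = 0)."""
    return sum(a * w for a, w in zip(qa, qw)) - offset


qa = [-128, 5, 90, 127]  # hypothetical int8 activations
qw = [12, -7, 3, -100]   # hypothetical int8 symmetric weights
za = -3                  # hypothetical activation zero_point

offset = weight_offset(qw, za)  # computed once, before inference
same = acc_symmetric_weights(qa, qw, offset) == acc_general(qa, qw, za, 0)
```

The inner loop of `acc_symmetric_weights` multiplies raw integer codes without subtracting any zero_point per element, which is the kind of saving the symmetric format makes possible.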

2.3. Signed integer vs Unsigned integer - supported schemes

Signed or unsigned integer types can be defined for the weights and/or activations. However, not all kernels are implemented, or relevant, for the different optimized combinations of the symmetric and asymmetric formats. This implies that only the following integer schemes or combinations are supported:

| scheme | weights | activations |
|---|---|---|
| ua/ua | unsigned and asymmetric | unsigned and asymmetric |
| ss/sa | signed and symmetric | signed and asymmetric |
| ss/ua | signed and symmetric | unsigned and asymmetric |
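
The table above can be encoded as a small lookup, for instance to check a model description in a script before importing it. This is a hypothetical helper written for illustration; the names and the tuple encoding are assumptions and are not part of the X-CUBE-AI tools.

```python
# (weights format, activations format) for each supported scheme.
SUPPORTED_SCHEMES = {
    "ua/ua": (("unsigned", "asymmetric"), ("unsigned", "asymmetric")),
    "ss/sa": (("signed", "symmetric"), ("signed", "asymmetric")),
    "ss/ua": (("signed", "symmetric"), ("unsigned", "asymmetric")),
}


def scheme_name(weights, activations):
    """Return the scheme label for a (weights, activations) pair, or None."""
    for name, pair in SUPPORTED_SCHEMES.items():
        if pair == (weights, activations):
            return name
    return None
```

For example, `scheme_name(("signed", "symmetric"), ("signed", "asymmetric"))` returns the `ss/sa` label, while a combination with symmetric activations returns `None`.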

3. Quantize TensorFlow models

X-CUBE-AI can import both quantization-aware trained and post-training quantized TensorFlow Lite models. Post-training quantized models (TensorFlow v1.15 or v2.x) are based on the “ss/sa” and per-channel scheme: activations are asymmetric and signed (int8), and weights/bias are symmetric and signed (int8). Older quantization-aware trained models are based on the “ua/ua” scheme; the “ss/sa” and per-channel scheme is now also the preferred scheme for efficiently targeting the Coral Edge TPUs or the TensorFlow Lite for Microcontrollers runtime.

3.1. Supported/recommended methods

- Post-training quantization: https://www.tensorflow.org/lite/performance/post_training_quantization

- Quantization aware training: https://www.tensorflow.org/model_optimization/guide/quantization/training

| method/option | supported/recommended |
|---|---|
| Dynamic range quantization | not supported, only the static approach is considered |
| Full integer quantization | supported, a representative data set should be used for the calibration |
| Integer with float fallback (using default float input/output) | supported, mixed model, a representative data set should be used for the calibration |
| Integer only | recommended, a representative data set should be used for the calibration |
| Weight Only Quantization | not supported |
| Input/output data type | 'uint8', 'int8' and 'float32' can be used |
| Float16 quantization | not supported, only float32 models are supported |
| Per Channel | default behavior, cannot be modified |
| Activation type | 'int8', “ss/sa” scheme |
| Weight type | 'int8', “ss/sa” scheme |

3.2. “Integer only” method

The following code snippet illustrates the recommended TFLiteConverter options to enforce a full integer scheme (post-training quantization for all operators, including the input/output tensors).

    import tensorflow as tf

    def representative_dataset_gen():
        # tload, get_sample and num_calibration_steps are user-provided.
        data = tload(...)
        for _ in range(num_calibration_steps):
            # Get sample input data as a numpy array in a method of your choosing.
            sample = get_sample(data)
            yield [sample]

    converter = tf.lite.TFLiteConverter.from_saved_model(<saved_model_dir>)
    # converter = tf.lite.TFLiteConverter.from_keras_model(model)
    converter.representative_dataset = representative_dataset_gen
    # This enables quantization
    converter.optimizations = [tf.lite.Optimize.DEFAULT]
    # This ensures that if any ops can't be quantized, the converter throws an error
    converter.target_spec.supported_ops = [tf.lite.OpsSet.TFLITE_BUILTINS_INT8]
    # These set the input and output tensors to int8
    converter.inference_input_type = tf.int8  # or tf.uint8
    converter.inference_output_type = tf.int8  # or tf.uint8
    quant_model = converter.convert()

    # Save the quantized file
    with open(<tflite_quant_model_path>, "wb") as f:
        f.write(quant_model)
    ...

3.3. “Full integer quantization” method

As mixed models are supported by X-CUBE-AI, the full integer quantization method can be used. However, it is preferable to enable the TensorFlow Lite ops (TFLITE_BUILTINS), as only a limited number of TensorFlow operators are supported.

    ...
    converter = tf.lite.TFLiteConverter.from_saved_model(<saved_model_dir>)
    # converter = tf.lite.TFLiteConverter.from_keras_model(model)
    converter.representative_dataset = representative_dataset_gen
    # This enables quantization
    converter.optimizations = [tf.lite.Optimize.DEFAULT]
    # Optional: ensures that the TF Lite built-in operators are used
    converter.target_spec.supported_ops = [tf.lite.OpsSet.TFLITE_BUILTINS]
    # These set the input and output tensors to float32
    converter.inference_input_type = tf.float32  # or tf.uint8, tf.int8
    converter.inference_output_type = tf.float32  # or tf.uint8, tf.int8
    quant_model = converter.convert()
    ...

3.4. Warning - Usage of the tf.lite.Optimize.DEFAULT

This option enables the quantization process. However, to be sure of obtaining both quantized weights and quantized activations, the representative_dataset attribute should always be set. Otherwise, only the weights/params are quantized, which reduces the size of the generated file by a factor of about 4. In that case, as with the deprecated OPTIMIZE_FOR_SIZE option, the tflite file is deployed as a fully floating-point C-model with the weights in float (dequantized values).

Note that this option should not be used to convert a TensorFlow model.
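
Before importing a converted file, it can be useful to check which data types its tensors actually use. The sketch below is one possible way to do this, assuming the `tf.lite.Interpreter` Python API is available in the installed TensorFlow version; the function names are hypothetical, and the result is only an indication, since a properly quantized model with float input/output also contains a few float32 tensors.

```python
def dtype_name(dtype):
    """Name of a NumPy scalar type such as numpy.int8 (or of a plain string)."""
    return getattr(dtype, "__name__", str(dtype))


def count_tensor_types(tensor_details):
    """Count tensors per data type from Interpreter.get_tensor_details()."""
    counts = {}
    for detail in tensor_details:
        name = dtype_name(detail["dtype"])
        counts[name] = counts.get(name, 0) + 1
    return counts


def inspect_tflite(model_path):
    """Load a .tflite file and report the data types of its tensors."""
    import tensorflow as tf  # imported lazily: only needed for real files

    interpreter = tf.lite.Interpreter(model_path=model_path)
    interpreter.allocate_tensors()
    return count_tensor_types(interpreter.get_tensor_details())
```

If most activation tensors are reported as float32 while the weights are int8, the calibration step was probably skipped and the file falls into the weights-only case described above.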

4. Quantize ONNX models

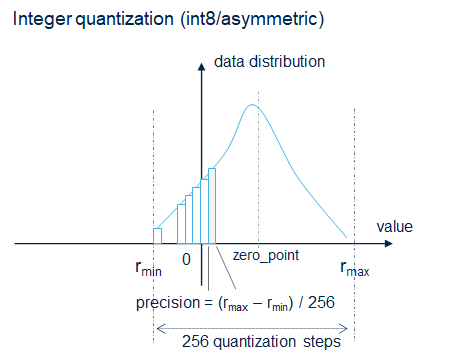

To quantize an ONNX model, it is recommended to use the services of the ONNX Runtime module. The Tensor-oriented (QDQ; Quantize and DeQuantize) format is preferred. As illustrated by the following figure, the additional DeQuantizeLinear(QuantizeLinear(tensor)) pairs between the original operators are automatically detected and removed so that the associated optimized C-kernels can be deployed.

Figure 3: resnet 50 example
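
A QDQ model such as the one in the figure can be produced with the static quantization service of ONNX Runtime. The following is a minimal sketch, assuming the `onnxruntime` package is installed. The function names, the file paths passed by the caller and the choice of int8 weights and activations with per-channel weights are assumptions made for illustration; `samples` is expected to be an iterable of float32 NumPy arrays shaped like the model input.

```python
def calibration_feeds(samples, input_name):
    """Yield one ONNX Runtime input feed ({name: array}) per calibration sample."""
    for sample in samples:
        yield {input_name: sample}


def quantize_to_qdq(float_model, quant_model, samples, input_name):
    """Static post-training quantization to the QDQ format (int8, per channel)."""
    # Imported lazily so that the helper above stays usable without the package.
    from onnxruntime.quantization import (
        CalibrationDataReader,
        QuantFormat,
        QuantType,
        quantize_static,
    )

    class Reader(CalibrationDataReader):
        def __init__(self):
            self._feeds = calibration_feeds(samples, input_name)

        def get_next(self):
            # Calibration stops when None is returned.
            return next(self._feeds, None)

    quantize_static(
        float_model,
        quant_model,
        Reader(),
        quant_format=QuantFormat.QDQ,
        per_channel=True,
        activation_type=QuantType.QInt8,
        weight_type=QuantType.QInt8,
    )
```

The resulting file can then be passed to the “analyze”, “validate” and “generate” commands like any other quantized ONNX model.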

Comments about the illustrated example:

- the Batch Normalization operators are merged automatically by the quantize function. The model can also be optimized for inference before it is quantized (ONNX Simplifier can also be used)

python -m onnxruntime.quantization.preprocess --input model.onnx --output model-infer.onnx

- as the deployed C-kernels are channel last (“hwc” data format), a "Transpose" operator has been added to respect the original input data representation. Note that this operation is done in software and its cost may not be negligible. To avoid this situation, the '--no-onnx-io-transpose' option can be used to process the data directly, without the transpose operation.

- by default, the I/O data type (float32) of the original ONNX model is not preserved if the inputs are quantized by the first QuantizeLinear operator (respectively, the outputs dequantized by the last DeQuantizeLinear operator). As illustrated in the following figure, the '--onnx-keep-io-as-model' option can be used to keep the I/O in float.

5. STMicroelectronics references

See also: