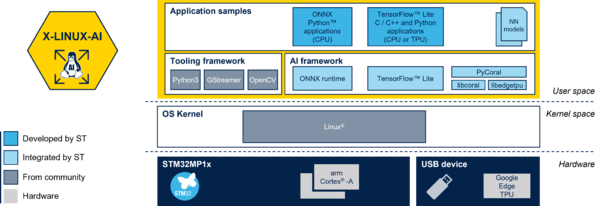

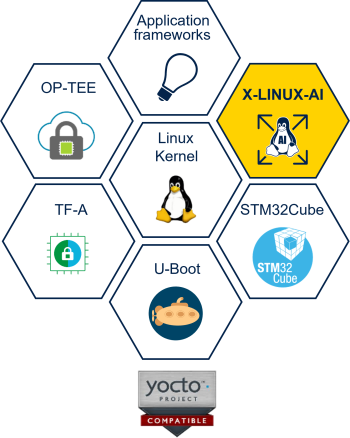

X-LINUX-AI is an STM32 MPU OpenSTLinux Expansion Package that targets artificial intelligence for STM32MP1 Series devices.

It contains Linux AI frameworks, as well as application examples to get started with some basic use cases such as computer vision (CV).

It is composed of an OpenEmbedded meta layer, named meta-st-stm32mpu-ai, to be added on top of the STM32MP1 Distribution Package.

It brings a complete and coherent easy-to-build / install environment to take advantage of AI on the STM32MP1 Series.

1. Version v2.0.0

1.1. Contents

- TensorFlow Lite[1] 2.2.0

- Google Edge TPU[2] accelerator native support

- armNN[3] 20.05

- OpenCV[4] 4.1.x

- Python[5] 3.8.x (enabling Pillow module)

- Support for STM32MP15xF[6] devices operating at up to 800 MHz

- Python and C++ application samples

  - Image classification using TensorFlow Lite based on MobileNet v1 model

  - Object detection using TensorFlow Lite based on COCO SSD MobileNet v1 model

  - Image classification using Google Edge TPU based on MobileNet v1 model

  - Object detection using Google Edge TPU based on COCO SSD MobileNet v1 model

  - Image classification using armNN TensorFlow Lite parser based on MobileNet v1 model

  - Object detection using armNN TensorFlow Lite parser based on COCO SSD MobileNet v1 model

1.2. Validated hardware

Like any software expansion package, X-LINUX-AI is supported on all STM32MP1 Series devices, but it has been validated on the following boards:

1.3. Software structure

1.4. Install from the OpenSTLinux AI package repository

All the generated X-LINUX-AI packages are available from the OpenSTLinux AI package repository service hosted at the non-browsable URL http://extra.packages.openstlinux.st.com/AI.

This repository contains AI packages that can be installed simply with the apt-* utilities, the same ones used on a Debian system. The packages are organized in two groups:

- the main group contains the selection of AI packages whose installation is automatically tested by STMicroelectronics

- the updates group is reserved for future use, such as package revision updates.

1.4.1. Prerequisites

- Flash the Starter Package on your SD card.

- Make sure your board has an internet connection, through either a network cable or a Wi-Fi connection.

1.4.2. Configure the AI OpenSTLinux package repository

Once the board is booted, execute the following command in the console in order to configure the AI OpenSTLinux package repository:

echo "deb http://extra.packages.openstlinux.st.com/AI/2.0 dunfell main updates" >> /etc/apt/sources.list.d/extra.packages.openstlinux.st.list

echo "DPkg::Pre-Invoke {\"echo 'https://wiki.st.com/stm32mpu/wiki/X-LINUX-AI_licenses' ; echo ; \";};" >> /etc/apt/apt.conf.d/10-st-disclaimer-extra-AI

echo "APT::Update::Pre-Invoke {\"echo 'https://wiki.st.com/stm32mpu/wiki/X-LINUX-AI_licenses' ; echo ;\";};" >> /etc/apt/apt.conf.d/10-st-disclaimer-extra-AI
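The first command appends a standard one-line APT source entry of the form `deb <uri> <suite> <component>...`. As a minimal sketch of how that entry is put together, the Python snippet below rebuilds the same string from its parts. The helper name `apt_source_line` is hypothetical and only for illustration. The snippet prints the line and does not touch `/etc/apt`.

```python
def apt_source_line(base_url, release, suite, groups):
    """Build a one-line APT source entry: deb <uri> <suite> <component>..."""
    # The repository URI is the base URL followed by the package release.
    uri = f"{base_url.rstrip('/')}/{release}"
    return " ".join(["deb", uri, suite, *groups])


# Values taken from the command above.
line = apt_source_line(
    "http://extra.packages.openstlinux.st.com/AI",
    "2.0",
    "dunfell",
    ["main", "updates"],
)
print(line)
```

Here `dunfell` is the suite and `main` and `updates` are the two package groups described earlier.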

1.4.3. Install AI packages

apt-get update

| Package Name | Command | Description |
|---|---|---|
| arm-compute-library | apt-get install arm-compute-library | Arm Compute Library (ACL) |
| arm-compute-library-tools | apt-get install arm-compute-library-tools | Arm Compute Library utilities (graph examples and benchmarks) |
| armnn | apt-get install armnn | Arm Neural Network SDK (armNN) |
| armnn-tensorflow-lite | apt-get install armnn-tensorflow-lite | armNN TensorFlow Lite parser |
| armnn-tensorflow-lite-examples | apt-get install armnn-tensorflow-lite-examples | armNN TensorFlow Lite examples |
| armnn-tfl-benchmark | apt-get install armnn-tfl-benchmark | armNN benchmark application for TensorFlow Lite models |
| armnn-tfl-cv-apps-image-classification-c++ | apt-get install armnn-tfl-cv-apps-image-classification-c++ | armNN TensorFlow Lite C++ image classification example |
| armnn-tfl-cv-apps-object-detection-c++ | apt-get install armnn-tfl-cv-apps-object-detection-c++ | armNN TensorFlow Lite C++ object detection example |
| armnn-tools | apt-get install armnn-tools | armNN utilities such as unit tests |
| libflatbuffers1 | apt-get install libflatbuffers1 | Memory Efficient Serialization Library |
| python3-pillow | apt-get install python3-pillow | Python Imaging Library |
| python3-tensorflow-lite | apt-get install python3-tensorflow-lite | Python TensorFlow Lite inference engine |
| tensorflow-lite-tools | apt-get install tensorflow-lite-tools | TensorFlow Lite utilities |
| tflite-cv-apps-image-classification-c++ | apt-get install tflite-cv-apps-image-classification-c++ | TensorFlow Lite C++ image classification example |
| tflite-cv-apps-image-classification-python | apt-get install tflite-cv-apps-image-classification-python | TensorFlow Lite Python image classification example |
| tflite-cv-apps-object-detection-c++ | apt-get install tflite-cv-apps-object-detection-c++ | TensorFlow Lite C++ object detection example |
| tflite-cv-apps-object-detection-python | apt-get install tflite-cv-apps-object-detection-python | TensorFlow Lite Python object detection example |
| tflite-models-coco-ssd-mobilenetv1 | apt-get install tflite-models-coco-ssd-mobilenetv1 | TensorFlow Lite COCO SSD MobileNet v1 model |
| tflite-models-mobilenetv1 | apt-get install tflite-models-mobilenetv1 | TensorFlow Lite MobileNet v1 model |
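Since `apt-get install` accepts several package names at once, a selection from the table can be installed with a single command. The sketch below is only an illustration: the helper `install_command` and the `KNOWN_PACKAGES` subset are hypothetical, and the snippet just builds and prints the command string without running it.

```python
import shlex

# Subset of package names copied from the table above (for illustration only).
KNOWN_PACKAGES = {
    "python3-pillow",
    "python3-tensorflow-lite",
    "tflite-models-mobilenetv1",
    "tflite-cv-apps-image-classification-python",
    "tflite-cv-apps-image-classification-c++",
}


def install_command(packages):
    """Return one apt-get command line installing all the given packages."""
    unknown = [name for name in packages if name not in KNOWN_PACKAGES]
    if unknown:
        raise ValueError(f"unknown package(s): {', '.join(unknown)}")
    # Quote each name defensively before joining into a shell command.
    return "apt-get install " + " ".join(shlex.quote(name) for name in packages)


print(install_command(["python3-tensorflow-lite", "tflite-models-mobilenetv1"]))
```

Rejecting names that are not in the list catches typos before the command reaches the board.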

1.5. Re-generate X-LINUX-AI OpenSTLinux distribution

With the following procedure, you can re-generate the complete distribution enabling the X-LINUX-AI expansion package.

This procedure is mandatory if you want to update some of the frameworks yourself or modify the application samples.

1.5.1. Download the STM32MP1 Distribution Package v2.0.0

Install the STM32MP1 Distribution Package v2.0.0, but do not initialize the OpenEmbedded environment yet (that is, do not source envsetup.sh).

1.5.1.1. Clone the following git repositories into <Distribution Package installation directory>/layers/meta-st

The software package is provided AS IS, and by downloading it, you agree to be bound to the terms of the software license agreement (SLA). The detailed content licenses can be found here.

cd <Distribution Package installation directory>/layers/meta-st

git clone https://github.com/STMicroelectronics/meta-st-stm32mpu-ai.git -b v2.0.0_dunfell

1.5.2. Set up the build environment

cd ../..

DISTRO=openstlinux-weston MACHINE=stm32mp1 source layers/meta-st/scripts/envsetup.sh

1.5.3. Add the new layers to the build system

bitbake-layers add-layer ../layers/meta-st/meta-st-stm32mpu-ai

1.5.4. Build the image

bitbake st-image-ai

1.5.5. Flash the built image

Follow this link to know how to flash the built image.

2. How to use the X-LINUX-AI Expansion Package

2.1. Material needed

To use the X-LINUX-AI OpenSTLinux Expansion Package, choose one of the following hardware setups:

- STM32MP157C-DK2[7] + a UVC USB webcam

- STM32MP157C-EV1[8] with the built-in camera

- STM32MP157A-EV1[9] with the built-in camera

Optional:

- Google Edge TPU[2] accelerator

2.2. Launch an AI application sample

Each application is described in a dedicated article that explains its purpose and how to install, use, and rebuild it.

Application samples are available in the X-LINUX-AI application samples zoo page.

2.3. Enjoy running your own NN models

The above examples provide application samples that demonstrate how to easily execute TensorFlow Lite NN models on the STM32MP1.

You are free to adapt the C/C++ applications or Python scripts for your own purposes, using your own TensorFlow Lite NN models.

Source code locations are provided in the application sample pages.
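As a rough starting point for a custom Python script, the sketch below runs a single image-classification inference with the TensorFlow Lite Python interpreter and ranks the results. It is not the code of the ST application samples. It assumes that the `python3-tensorflow-lite` package exposes the `tflite_runtime` module and that NumPy is available on the target. The helper names `top_k` and `classify` are hypothetical, and the input image is assumed to be already resized to the model's input shape.

```python
def top_k(scores, labels, k=3):
    """Return the k (label, score) pairs with the highest scores."""
    ranked = sorted(zip(labels, scores), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


def classify(model_path, image, labels, k=3):
    """Run one inference on a preprocessed image and return the top-k labels.

    `image` is assumed to be a NumPy array that already matches the model's
    input height, width, and channel count (without the batch dimension).
    """
    # Imported lazily so that top_k() stays usable without the runtime.
    import numpy as np
    import tflite_runtime.interpreter as tflite  # assumption: module name on the target

    interpreter = tflite.Interpreter(model_path=model_path)
    interpreter.allocate_tensors()
    input_info = interpreter.get_input_details()[0]
    output_info = interpreter.get_output_details()[0]

    # Add the batch dimension and cast to the dtype the model expects.
    batch = np.expand_dims(image, axis=0).astype(input_info["dtype"])
    interpreter.set_tensor(input_info["index"], batch)
    interpreter.invoke()

    scores = interpreter.get_tensor(output_info["index"])[0]
    return top_k(scores.tolist(), labels, k)
```

On the board, a call would look like `classify(model_path, image, labels)`, where `model_path` points to your own `.tflite` file and `labels` is the list of class names in the model's output order.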