1. Install from the OpenSTLinux AI package repository

All the generated X-LINUX-AI packages are available from the OpenSTLinux AI package repository service hosted at the non-browsable URL http://extra.packages.openstlinux.st.com/AI.

This repository contains AI packages that can be installed with the apt-* utilities, the same as those used on a Debian system. The packages are organized in two groups:

- the main group contains the selection of AI packages whose installation is automatically tested by STMicroelectronics

- the updates group is reserved for future use, such as package revision updates.

You can install them individually or by package group.

1.1. Prerequisites

- Flash the Starter Package on your SD card (for OpenSTLinux ecosystem release v5.0.0).

- Your board has an internet connection either through the network cable or through a WiFi connection.

1.2. Configure the AI OpenSTLinux package repository

Once the board is booted, execute the following commands in the console to configure the AI OpenSTLinux package repository.

For ecosystem release v5.0.0:

- Move to the apt archives directory:

cd /var/cache/apt/archives

- Retrieve the specific package apt-openstlinux-ai_1.0_armhf.deb:

wget http://extra.packages.openstlinux.st.com/AI/5.0/pool/config/a/apt-openstlinux-ai/apt-openstlinux-ai_1.0_armhf.deb

- Install this package:

apt-get install ./apt-openstlinux-ai_1.0_armhf.deb

- Then synchronize the AI OpenSTLinux package repository:

apt-get update
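The four steps above can be gathered into a single function. This is only a minimal sketch for ecosystem release v5.0.0: the variable names and the `configure_ai_repo` function name are hypothetical, while the URL and package file name are copied from the commands above.

```shell
# Sketch only: names below are illustrative and not part of X-LINUX-AI.
# The URL and .deb file name are the ones used in the steps above (release v5.0.0).
AI_REPO_BASE="http://extra.packages.openstlinux.st.com/AI/5.0"
AI_REPO_DEB="apt-openstlinux-ai_1.0_armhf.deb"
AI_REPO_URL="${AI_REPO_BASE}/pool/config/a/apt-openstlinux-ai/${AI_REPO_DEB}"

# Runs in a subshell so the caller's working directory is left unchanged.
# Each step only runs if the previous one succeeded.
configure_ai_repo() (
    cd /var/cache/apt/archives &&
    wget "$AI_REPO_URL" &&
    apt-get install "./${AI_REPO_DEB}" &&
    apt-get update
)
```

On the board, calling `configure_ai_repo` from the console would then run the same sequence and stop at the first step that fails.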

1.3. Install AI packages

1.3.1. Install all X-LINUX-AI packages

| Command | Description |
|---|---|
| apt-get install packagegroup-x-linux-ai | Install all the X-LINUX-AI packages (TensorFlow™ Lite, Edge TPU™, application samples and tools) |

1.3.2. Install X-LINUX-AI packages related to a specific framework

| Command | Description |
|---|---|
| apt-get install packagegroup-x-linux-ai-tflite | Install X-LINUX-AI packages related to TensorFlow™ Lite framework (including application samples) |
| apt-get install packagegroup-x-linux-ai-tflite-edgetpu | Install X-LINUX-AI packages related to the Coral Edge TPU™ framework (including application samples) |
| apt-get install packagegroup-x-linux-ai-onnxruntime | Install X-LINUX-AI packages related to ONNX Runtime™ (including application samples) |
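When the framework is chosen at run time (for example in a provisioning script), the package group name can be derived from a short keyword. The helper below is a hypothetical sketch: the function name `ai_packagegroup` and the keywords `all`, `tflite`, `edgetpu` and `onnxruntime` are assumptions made for illustration, and only the package group names come from the tables above.

```shell
# Hypothetical helper (not part of X-LINUX-AI): maps a short framework keyword
# to one of the package group names listed above.
ai_packagegroup() {
    case "$1" in
        all)         echo "packagegroup-x-linux-ai" ;;
        tflite)      echo "packagegroup-x-linux-ai-tflite" ;;
        edgetpu)     echo "packagegroup-x-linux-ai-tflite-edgetpu" ;;
        onnxruntime) echo "packagegroup-x-linux-ai-onnxruntime" ;;
        *)           echo "unknown framework: $1" >&2; return 1 ;;
    esac
}

# Usage on the board (left commented out because it installs packages):
# apt-get install "$(ai_packagegroup onnxruntime)"
```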

1.3.3. Install individual packages

| Command | Description |
|---|---|
| apt-get install libedgetpu2 | Install libedgetpu for Coral Edge TPU™ |
| apt-get install libcoral2 | Install libcoral API for Coral Edge TPU™ |
| apt-get install libtensorflow-lite-tools | Install TensorFlow™ Lite utilities |
| apt-get install libtensorflow-lite2 | Install TensorFlow™ Lite runtime |
| apt-get install python3-libtensorflow-lite | Install Python TensorFlow™ Lite inference engine |
| apt-get install python3-pycoral | Install Python PyCoral API for Coral Edge TPU™ |
| apt-get install tflite-cv-apps-edgetpu-image-classification-c++ | Install C++ image classification example using Coral Edge TPU™ TensorFlow™ Lite API |
| apt-get install tflite-cv-apps-edgetpu-image-classification-python | Install Python image classification example using Coral Edge TPU™ TensorFlow™ Lite API |
| apt-get install tflite-cv-apps-edgetpu-object-detection-c++ | Install C++ object detection example using Coral Edge TPU™ TensorFlow™ Lite API |
| apt-get install tflite-cv-apps-edgetpu-object-detection-python | Install Python object detection example using Coral Edge TPU™ TensorFlow™ Lite API |
| apt-get install tflite-cv-apps-image-classification-c++ | Install C++ image classification example using TensorFlow™ Lite |
| apt-get install tflite-cv-apps-image-classification-python | Install Python image classification example using TensorFlow™ Lite |
| apt-get install tflite-cv-apps-object-detection-c++ | Install C++ object detection example using TensorFlow™ Lite |
| apt-get install tflite-cv-apps-object-detection-python | Install Python object detection example using TensorFlow™ Lite |
| apt-get install tflite-edgetpu-benchmark | Install benchmark application for Coral Edge TPU™ models |
| apt-get install tflite-models-coco-ssd-mobilenetv1 | Install TensorFlow™ Lite COCO SSD Mobilenetv1 model |
| apt-get install tflite-models-coco-ssd-mobilenetv1-edgetpu | Install TensorFlow™ Lite COCO SSD Mobilenetv1 model for Coral Edge TPU™ |
| apt-get install tflite-models-mobilenetv1 | Install TensorFlow™ Lite Mobilenetv1 model |
| apt-get install tflite-models-mobilenetv1-edgetpu | Install TensorFlow™ Lite Mobilenetv1 model for Coral Edge TPU™ |
| apt-get install onnxruntime | Install ONNX Runtime™ |
| apt-get install onnxruntime-tools | Install ONNX Runtime™ utilities |
| apt-get install python3-onnxruntime | Install ONNX Runtime™ Python API |
| apt-get install onnx-models-coco-ssd-mobilenetv1 | Install ONNX Runtime™ COCO SSD Mobilenetv1 model |
| apt-get install onnx-models-mobilenet | Install ONNX Runtime™ Mobilenetv1 model |
| apt-get install onnx-cv-apps-image-classification-python | Install Python image classification example using ONNX Runtime™ |
| apt-get install onnx-cv-apps-object-detection-c++ | Install C++ object detection example using ONNX Runtime™ |
| apt-get install onnx-cv-apps-object-detection-python | Install Python object detection example using ONNX Runtime™ |
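Because apt-get accepts several package names in one call, a reduced setup can be installed in a single command instead of one package at a time. The selection below is an illustrative assumption, not an official bundle: it picks the three packages from the list above that relate to Python image classification with TensorFlow™ Lite. The variable and function names are hypothetical, and apt may pull in some of these packages as dependencies anyway.

```shell
# Illustrative selection (an assumption, not an official bundle): packages from
# the list above related to Python image classification with TensorFlow Lite.
TFLITE_PY_CLASSIF_PKGS="python3-libtensorflow-lite tflite-models-mobilenetv1 tflite-cv-apps-image-classification-python"

# Hypothetical wrapper: installs the whole selection with one apt-get call.
install_tflite_py_classif() {
    # The variable is deliberately left unquoted so that the shell splits it
    # into one argument per package name.
    apt-get install $TFLITE_PY_CLASSIF_PKGS
}
```

Running `install_tflite_py_classif` on the board installs the three packages together. Adding the `--dry-run` option to the apt-get call shows what would be installed without changing the system.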

2. How to use the X-LINUX-AI Expansion Package

2.1. Material needed

To use the X-LINUX-AI OpenSTLinux Expansion Package, choose one of the following hardware setups:

- STM32MP157F-DK2 Discovery kit with a UVC USB webcam

- STM32MP157F-EV1 Evaluation board with the built-in camera module: MB1379 (OmniVision OV5640 parallel camera)

- STM32MP135F-DK Discovery kit with the built-in camera module: MB1897 (GalaxyCore 2145 parallel camera)

Optional:

- Coral USB Edge TPU™[1] accelerator

2.2. Boot the OpenSTLinux Starter Package

At the end of the boot sequence, the demo launcher application appears on the screen.

2.3. Install the X-LINUX-AI

After configuring the AI OpenSTLinux package repository, you can install the X-LINUX-AI components:

apt-get install packagegroup-x-linux-ai

Then restart the demo launcher:

systemctl restart weston-graphical-session.service

Check that X-LINUX-AI is properly installed:

x-linux-ai -v

X-LINUX-AI version: v5.0.0
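In a script, the same check can compare the reported version against the expected one. The helper below is a hypothetical sketch: the function name `xlinuxai_version_from` is illustrative, and it assumes the tool prints a single line in the format shown above.

```shell
# Hypothetical helper: extracts the version string from a line such as
# "X-LINUX-AI version: v5.0.0" (the output format shown above).
xlinuxai_version_from() {
    # Remove everything up to and including "version: ".
    printf '%s\n' "${1##*version: }"
}

# Usage on the board (left commented out because it needs x-linux-ai installed):
# installed="$(xlinuxai_version_from "$(x-linux-ai -v)")"
# [ "$installed" = "v5.0.0" ] || echo "unexpected X-LINUX-AI version: $installed" >&2
```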

2.4. Launch an AI application sample

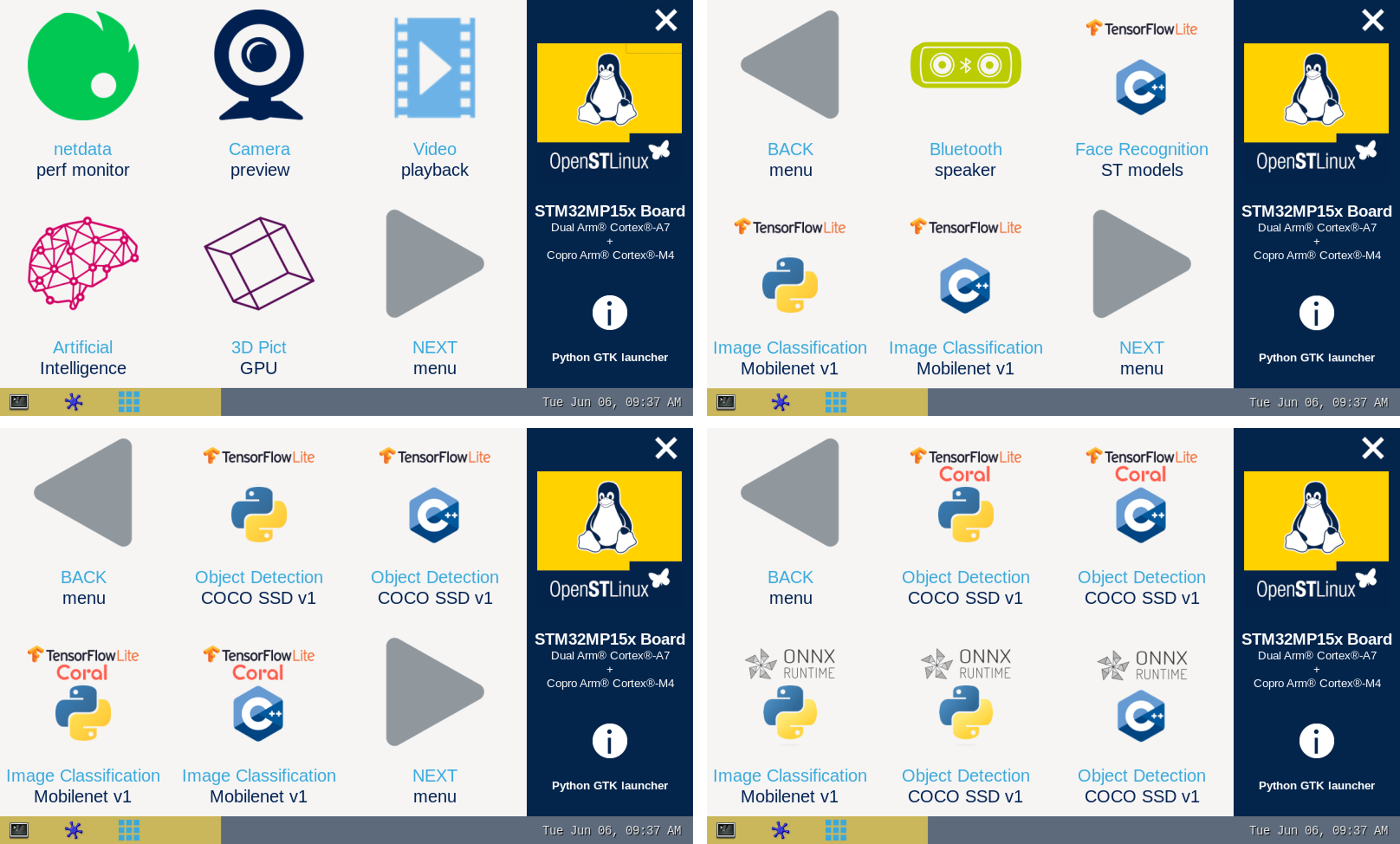

Once the demo launcher has restarted, it looks slightly different because new AI application samples have been installed.

The demo launcher has the following appearance, and you can navigate between the different screens by using the NEXT or BACK buttons.

The demo launcher now contains AI application samples, each described in a dedicated article available from the X-LINUX-AI application samples zoo page.

2.5. Enjoy running your own NN models

The application samples above demonstrate how to execute models easily on the STM32MP1.

You are free to update the C/C++ application or Python scripts for your own purposes, using your own NN models.

Source code locations are provided in application sample pages.

2.6. References