
The FP-AI-NANOEDG1 function pack helps users jump-start the development and implementation of condition monitoring applications powered by the NanoEdge™ AI Studio solution from Cartesiam.

NanoEdge™ AI Studio simplifies the creation of autonomous machine learning libraries that can run not only inference but also training on the edge. It facilitates the integration of predictive maintenance capabilities, as well as security and detection functions based on self-learning and self-understanding of sensor patterns, without requiring special skills in mathematics, machine learning, data science, or the creation and training of neural networks.

FP-AI-NANOEDG1 covers the entire Machine Learning design cycle, from data set acquisition to the integration of NanoEdge™ AI Studio generated libraries on a physical node. It runs the inference in real time on an STM32L4R9ZI ultra-low-power microcontroller (Arm® Cortex®-M4 at 120 MHz with 2 Mbytes of Flash memory and 640 Kbytes of SRAM), taking physical sensor data as input. The generation of the NanoEdge™ library itself is outside the scope of this function pack; the library must be generated using NanoEdge™ AI Studio.

FP-AI-NANOEDG1 implements a wired interactive command-line interface (CLI) to configure the node, record data, and manage the learning and detection phases. All these operations can also be performed in a standalone battery-operated mode through the user button, without the console. In addition, V2.0 of FP-AI-NANOEDG1 comes with a fully autonomous mode that is configured through configuration files. This mode lets the user run different execution phases automatically when the sensor node is powered up or reset.

This user manual describes the content of the FP-AI-NANOEDG1 function pack and details the different steps to build such applications on the STEVAL-STWINKT1B.

1. General information

The FP-AI-NANOEDG1 function pack runs on STM32L4+ microcontrollers based on Arm® cores.

1.1. Feature overview

- Complete firmware to program an STM32L4+ sensor node for condition monitoring and predictive maintenance applications

- Stub for replacement with a Cartesiam Machine Learning library generated using NanoEdge™ AI Studio for the desired AI application

- Configuration and acquisition of STMicroelectronics 3-axis digital vibration sensor (IIS3DWB), and 3D digital accelerometer and 3D digital gyroscope iNEMO inertial measurement unit (ISM330DHCX) with machine learning core

- Data logging on a microSD™ card

- Embedded file system utilities

- Configurable autonomous mode controlled by user button

- Interactive command-line interface (CLI) for

- - Node and sensor configuration

- - Data logging

- - Learning and detection phase management of the NanoEdge™ library

- Easy portability across STM32 microcontrollers by means of the STM32Cube ecosystem

- Free and user-friendly license terms

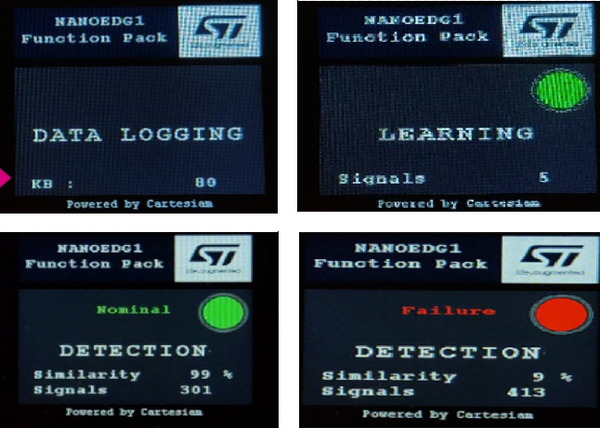

1.2. Software architecture

The STM32Cube function packs leverage the modularity and interoperability of STM32 Nucleo boards and expansion boards running STM32Cube MCU Packages and Expansion Packages to create functional examples representing some of the most common use cases in certain applications. The function packs are designed to fully exploit the underlying STM32 ODE hardware and software components to best satisfy the final user application requirements.

Function packs may include additional libraries and frameworks, not present in the original STM32Cube Expansion Packages, which enable new functions and create more targeted and usable systems for developers.

STM32Cube includes:

- A set of user-friendly software development tools to cover project development from the conception to the realization, among which are:

- STM32CubeMX, a graphical software configuration tool that allows the automatic generation of C initialization code using graphical wizards

- STM32CubeIDE, an all-in-one development tool with peripheral configuration, code generation, code compilation, and debug features

- STM32CubeProgrammer (STM32CubeProg), a programming tool available in graphical and command-line versions

- STM32CubeMonitor (STM32CubeMonitor, STM32CubeMonPwr, STM32CubeMonRF, STM32CubeMonUCPD), powerful monitoring tools to fine-tune the behavior and performance of STM32 applications in real time

- STM32Cube MCU & MPU Packages, comprehensive embedded-software platforms specific to each microcontroller and microprocessor series (such as STM32CubeL4 for the STM32L4+ Series), which include:

- STM32Cube hardware abstraction layer (HAL), ensuring maximized portability across the STM32 portfolio

- STM32Cube low-layer APIs, ensuring the best performance and footprints with a high degree of user control over the HW

- A consistent set of middleware components such as RTOS, USB Device, USB PD, FAT file system, Touch library, Trusted Firmware (TF-M), mbedTLS, and mbed-crypto

- All embedded software utilities with full sets of peripheral and applicative examples

- STM32Cube Expansion Packages, which contain embedded software components that complement the functionalities of the STM32Cube MCU & MPU Packages with:

- Middleware extensions and applicative layers

- Examples running on some specific STMicroelectronics development boards

To access and use the sensor expansion board, the application software uses:

- STM32Cube hardware abstraction layer (HAL): provides a simple, generic, and multi-instance set of generic and extension APIs (application programming interfaces) to interact with the upper layer applications, libraries, and stacks. It is directly based on a generic architecture and allows the layers that are built on it, such as the middleware layer, to implement their functions without requiring the specific hardware configuration for a given microcontroller unit (MCU). This structure improves library code reusability and guarantees easy portability across other devices.

- Board support package (BSP) layer: supports the peripherals on the STM32 Nucleo boards.

The top-level architecture of the FP-AI-NANOEDG1 function pack is shown in the following figure.

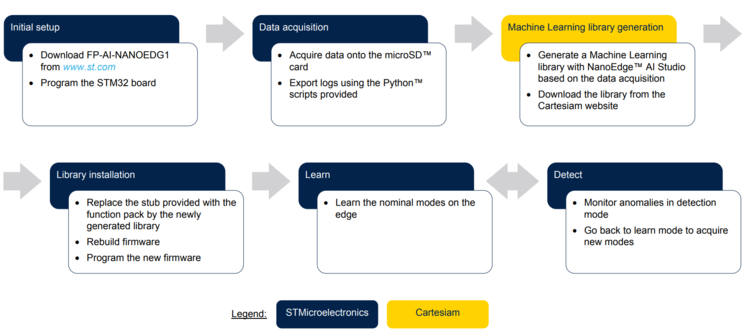

1.3. Development flow

FP-AI-NANOEDG1 does not embed any Machine Learning technology. Instead, it provides all the means to help the generation of the Machine Learning algorithm by NanoEdge™ AI Studio and embed it easily in the STEVAL-STWINKT1B evaluation kit in a practical way. The steps of the suggested flow are presented in the figure below.

1.4. Folder structure

The following folders are included in the function pack:

- Documentation: contains a compiled html file generated from the source code, which details the software components and APIs.

- Drivers: contains the HAL drivers, the board-specific drivers for each supported board or hardware platform (including the on-board components), and the CMSIS vendor-independent hardware abstraction layer for the Cortex®-M processors.

- Middlewares: contains libraries and protocols for the USB Device library, the generic FAT file system module (FatFS), the FreeRTOS™ real-time OS, Parson (a JSON parser), and the NanoEdge™ AI library stub.

- Projects: contains a sample application used for collecting data from motion sensors onto the microSD™ card and for managing the learning and detection phases of the Cartesiam Machine Learning solution, provided for the STEVAL-STWINKT1B platform through the STM32CubeIDE development environment.

- Utilities: contains scripts and samples for exporting the datalogs generated by the function pack datalogger to NanoEdge™ AI Studio compliant .csv files, which are required to generate the Machine Learning libraries from NanoEdge™ AI Studio. It also contains an STM32CubeMX companion project that can help to port or configure the function pack.

1.5. Terms and definitions

| Acronym | Definition |

|---|---|

| API | Application programming interface |

| BSP | Board support package |

| CLI | Command line interface |

| FP | Function pack |

| GUI | Graphical user interface |

| HAL | Hardware abstraction layer |

| LCD | Liquid-crystal display |

| MCU | Microcontroller unit |

| ML | Machine learning |

| ODE | Open development environment |

1.6. References

| References | Description | Source |

|---|---|---|

| [1] | Cartesiam website | cartesiam.ai |

| [2] | STEVAL-STWINKT1B | add link to STWIN |

1.7. Prerequisites

The following IDEs for STM32 must be installed:

- STMicroelectronics - STM32CubeIDE version 1.4.2 or later

FP-AI-NANOEDG1 can be deployed on the following operating systems:

- Windows® 10

- Ubuntu® 18.04 and Ubuntu® 16.04 (or derived)

- macOS® (x64)

Note: Ubuntu® is a registered trademark of Canonical Ltd. macOS® is a trademark of Apple Inc. registered in the U.S. and other countries.

1.8. License

FP-AI-NANOEDG1 is delivered under the Mix Ultimate Liberty+OSS+3rd-party V1 software license agreement (SLA0048).

The software components provided in this package come with different license schemes as shown in the table below.

| Software component | Owner | License |

|---|---|---|

| Cortex®-M CMSIS | Arm Limited | Apache License 2.0 |

| FreeRTOS™ | Amazon.com, Inc. or its affiliates | MIT |

| STM32L4xxx_HAL_Driver | STMicroelectronics | BSD-3-Clause |

| Board support package (BSP) | STMicroelectronics | BSD-3-Clause |

| STM32L4xx CMSIS | Arm Limited - STMicroelectronics | Apache License 2.0 |

| FatFS | ChaN | BSD-3-Clause |

| Parson | Krzysztof Gabis | MIT |

| Applications | STMicroelectronics | Ultimate Liberty (source release) |

| Python Scripts | STMicroelectronics | BSD-3-Clause |

| Dataset | STMicroelectronics | Ultimate Liberty |

2. Hardware and firmware setup

2.1. Presentation of the Target STM32 board

The STWIN SensorTile wireless industrial node (STEVAL-STWINKT1B) is a development kit and reference design that simplifies prototyping and testing of advanced industrial IoT applications such as condition monitoring and predictive maintenance. It is powered by an ultra-low-power Arm® Cortex®-M4 MCU at 120 MHz with FPU and 2048 Kbytes of Flash memory (STM32L4R9). In addition, the STWIN SensorTile is equipped with a microSD™ card slot for standalone data logging applications, on-board wireless BLE 4.2 and Wi-Fi (with the STEVAL-STWINWFV1 expansion board) connectivity, wired RS485 and USB OTG connectivity, and a brand protection secure solution with STSAFE-A110 (footprint). The STWIN SensorTile is also equipped with a wide range of industrial IoT sensors, including:

- an ultra-wide bandwidth (up to 6 kHz), low-noise, 3-axis digital vibration sensor (IIS3DWB)

- a 6-axis digital accelerometer and gyroscope iNEMO inertial measurement unit (IMU) with machine learning (ISM330DHCX)

- an ultra-low-power high-performance MEMS motion sensor (IIS2DH)

- an ultra-low-power 3-axis magnetometer (IIS2MDC)

- a digital absolute pressure sensor (LPS22HH)

- a relative humidity and temperature sensor (HTS221)

- a low-voltage digital local temperature sensor (STTS751)

- an industrial-grade digital MEMS microphone (IMP34DT05), and

- a wideband analog MEMS microphone (MP23ABS1)

Other attractive features include:

- a 480 mAh Li-Po battery to enable standalone working mode

- an STLINK-V3MINI debugger with programming cable to flash the board

- a plastic box for ease of placing and planting the SensorTile on the machines for condition monitoring

For further details, the users are advised to visit this link.

2.2. Connect hardware

To start using FP-AI-NANOEDG1, the user needs:

- A personal computer with one of the supported operating systems

- One USB cable to connect the PC to the Micro-B USB connector on the board

- An STEVAL-STWINKT1B board

- One microSD™ card formatted as FAT32.

Follow the next two steps to connect hardware:

- Connect the STM32 sensor board to the computer through the STLINK using a micro-USB cable. This connection is used to program the STM32 MCU. Note that to control the board later through the CLI panel, the user needs to connect the serial port on the USART of the board.

- Insert the microSD™ card into the dedicated slot to store the data from the datalogger.

Note: Before connecting the sensor board to a Windows® PC via USB, the user needs to install a driver for the ST-LINK-V3E (not required for Windows® 10). This software can be found on the ST website. The power source for the board can either be a battery for standalone operations or a USB cable connection to the USART interface.

2.3. Program firmware into the STM32 microcontroller

This section explains how to select binary firmware and program it into the STM32 microcontroller. Binary firmware is delivered as part of the FP-AI-NANOEDG1 function pack. It is located in the Projects\STM32L4R9ZI-STWIN\Applications\NanoEdgeConsole\Binary folder. When the STM32 board and PC are connected through the USB cable on the STLINK-V3E connector, the related drive is available on the PC. Drag and drop the chosen firmware into that drive. Wait a few seconds for the firmware file to disappear from the file manager: this indicates that firmware is programmed into the STM32 microcontroller.

2.4. Using the serial console

2.4.1. Set the serial terminal configuration

A serial console is used to interact with the host board (Virtual COM port over USB). With the Windows® operating system, the use of the Tera Term software is recommended. Start Tera Term, select the proper connection (featuring the STMicroelectronics name), and set the parameters:

- Terminal

- [New line]

- [Receive]: CR

- [Transmit]: CR

- [Local echo] selected

- Serial

- [Baud rate]: 115200

- [Data]: 8 bit

- [Parity]: none

- [Stop]: 1 bit

- [Flow control]: none

- [Transmit delay]: 10 ms

2.4.2. Start FP-AI-NANOEDG1 firmware

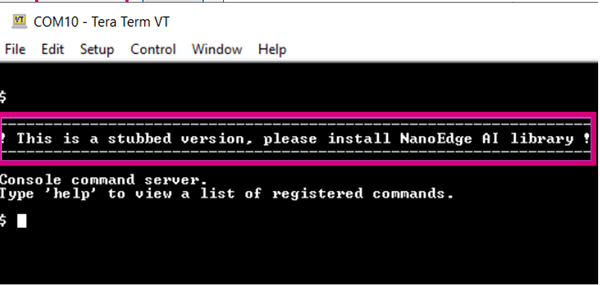

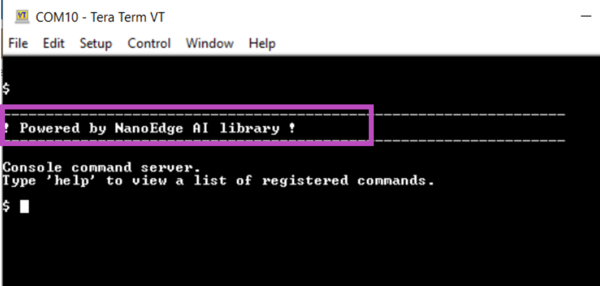

Restart the board by pressing the black reset button. The following welcome screen is displayed on the terminal.

From this point, start entering the commands directly or type help to get the list of available commands along with their usage guidelines.

Note: The provided firmware is generated with a stub in place of the actual library. The user must generate the library with the help of NanoEdge™ AI Studio, replace the stub with it, and rebuild the firmware. These steps are detailed in the following sections.

3. Autonomous and button-operated modes

This section provides details of the extended autonomous mode of the FP-AI-NANOEDG1 function pack version 2.0. The purpose of the extended mode is to enable users to operate FP-AI-NANOEDG1 on the STWIN without the need for the CLI console.

In autonomous mode, the sensor node is controlled through the user button instead of the interactive CLI console. The default values for the node parameters and the settings for the operations during auto mode are provided in the firmware (FW), and the different modes (learn, detect, and datalog) can be started and stopped through the user button on the node. The user can override these parameters and configurations by placing a JSON configuration file on the microSD™ card inserted in the node.

In addition to this button-operated mode, the autonomous mode comes with a special mode, the so-called “auto mode”, which can be initiated automatically at device power-up or reset. In this mode, the user can define a chain of modes/commands to run as a list of execution steps. Typically, these commands learn the nominal mode for a given duration and then start detecting anomalies. This mode can also be used to start datalog operations.

The following sections provide brief details on different components of this autonomous mode.

3.1. Interaction with user

As mentioned before, the auto-mode assumes no connectivity (wired or wireless) but needs to remain compatible and consistent with the current definition of the serial console and its Command Line Interface (CLI). The current supporting hardware (STWIN) is fitted with three buttons:

- User Button, the only button usable by software

- Reset Button, connected to STM32 MCU reset pin

- Power Button connected to power management

and three LEDs:

- LED_1 (green), controlled by Software

- LED_2 (orange), controlled by Software

- LED_C (red), controlled by Hardware, indicates charging status

The basic user interaction is done through two buttons (user and reset) and two LEDs (green and orange).

3.1.1. LED Allocation

The user needs to be aware of the current execution phase. Four execution phases exist in the function pack:

- idle: the system waits for a specified amount of time.

- datalog: sensor(s) output data are streamed onto an SD card

- learn: all data coming from the sensor(s) are passed to the NanoEdge AI library to train the model.

- detect: all data coming from the sensor(s) are passed to the NanoEdge AI library to detect anomalies.

If a fatal error occurs and the FW stops as a result, the user needs to be notified, either to debug or to reset the node.

The outcome of the detection, that is, whether an anomaly has been detected or not, must also be reported.

These six states must be encoded with the two available LEDs, which requires at least three patterns in addition to the OFF state. The following patterns (OFF plus four ON patterns) are defined for each LED:

| Pattern | Description |

|---|---|

| OFF | the LED is always OFF |

| ON | the LED is always ON |

| BLINK | the LED alternates ON and OFF periods of equal duration (400 ms) |

| BLINK_SHORT | the LED alternates short (200 ms) ON periods with short OFF period (200 ms) |

| BLINK_LONG | the LED alternates long (800 ms) ON periods with long OFF period (800 ms) |

| Pattern | Green | Orange |

|---|---|---|

| OFF | Power OFF | |

| ON | idle | System error |

| BLINK | Data logging | microSD™ card missing |

| BLINK_SHORT | Detecting, no anomaly | Anomaly detected |

| BLINK_LONG | Learning, status OK | Learning, status OK |
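The two tables above can be read as a lookup from the system state to an LED and a pattern. The following Python sketch illustrates that reading; it is not the firmware implementation. The state names, the dictionary layout, and the assumption that each blinking pattern starts with its ON period are hypothetical. Learning is mapped to the green LED only, although the table lists the same label for both LEDs.

```python
# Illustrative sketch only: names and structure are hypothetical, not firmware code.

# ON time (equal to OFF time) of each blinking pattern in ms, from the pattern table.
PATTERN_HALF_PERIOD_MS = {"BLINK": 400, "BLINK_SHORT": 200, "BLINK_LONG": 800}

# System state -> (LED, pattern), from the allocation table.
STATE_TO_LED = {
    "idle": ("green", "ON"),
    "datalog": ("green", "BLINK"),
    "detect_no_anomaly": ("green", "BLINK_SHORT"),
    "learn": ("green", "BLINK_LONG"),
    "system_error": ("orange", "ON"),
    "sd_card_missing": ("orange", "BLINK"),
    "anomaly_detected": ("orange", "BLINK_SHORT"),
}


def led_is_on(pattern, elapsed_ms):
    """Return True if an LED driven with `pattern` is lit `elapsed_ms` after the pattern starts.

    Assumption: every blinking pattern starts with its ON period.
    """
    if pattern == "ON":
        return True
    if pattern == "OFF":
        return False
    half_period = PATTERN_HALF_PERIOD_MS[pattern]
    return (elapsed_ms // half_period) % 2 == 0


led, pattern = STATE_TO_LED["datalog"]
print(led, pattern, led_is_on(pattern, 100), led_is_on(pattern, 500))
```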

3.1.2. Button Allocation

The user can start one of the four execution phases or a special mode called auto mode:

- idle: the system waits for a specified amount of time.

- datalog: sensor(s) output data are streamed onto an SD card

- learn: all data coming from the sensor(s) are passed to the NanoEdge AI library to train the model.

- detect: all data coming from the sensor(s) are passed to the NanoEdge AI library to detect anomalies.

- auto mode: a succession of predefined execution phases

Two buttons are available for this purpose:

- the user button, which is fully under application control

- the reset button, which triggers a hardware reset and thus a software reset.

The user button must therefore distinguish at least three different kinds of press, while the reset button is dedicated to starting the auto mode (if configured).

| Button Press | Description |

|---|---|

| SHORT_PRESS | The button is pressed for less than 200 ms and released |

| LONG_PRESS | The button is pressed for more than 200 ms and released |

| DOUBLE_PRESS | A succession of two SHORT_PRESS in less than 500 ms |

| ANY_PRESS | The button is pressed and released (overlaps with the three other modes) |
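As an illustration of the thresholds in the table above, the following Python sketch classifies a sequence of button presses. It is a minimal sketch under stated assumptions, not the firmware logic: the function name and the input format are hypothetical, a press of exactly 200 ms is treated as long, and the 500 ms window is measured from the first press to the second release.

```python
# Illustrative sketch only: names, input format, and boundary handling are assumptions.
SHORT_PRESS_MAX_MS = 200       # presses shorter than this are SHORT_PRESS
DOUBLE_PRESS_WINDOW_MS = 500   # two short presses within this window form a DOUBLE_PRESS


def classify_presses(presses):
    """Classify button presses.

    `presses` is a time-ordered list of (press_time_ms, release_time_ms) tuples.
    Returns a list of "SHORT_PRESS", "LONG_PRESS", or "DOUBLE_PRESS" labels.
    """
    labels = []
    i = 0
    while i < len(presses):
        start, end = presses[i]
        if end - start >= SHORT_PRESS_MAX_MS:
            labels.append("LONG_PRESS")
            i += 1
            continue
        # The current press is short: check whether a second short press follows in time.
        if i + 1 < len(presses):
            next_start, next_end = presses[i + 1]
            second_is_short = next_end - next_start < SHORT_PRESS_MAX_MS
            if second_is_short and next_end - start < DOUBLE_PRESS_WINDOW_MS:
                labels.append("DOUBLE_PRESS")
                i += 2
                continue
        labels.append("SHORT_PRESS")
        i += 1
    return labels


print(classify_presses([(0, 100), (200, 300), (1000, 1400)]))
```

Every label returned by this function also counts as an ANY_PRESS, which is why ANY_PRESS is not a separate return value.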

The table below maps the different button press patterns to phase transitions:

| Current Phase | Action | Next Phase |
|---|---|---|
| idle | SHORT_PRESS | detect |
| idle | LONG_PRESS | learn |
| idle | DOUBLE_PRESS | datalog |
| idle | RESET | auto-mode if configured, or idle |
| learn, detect, datalog, or auto-mode | ANY_PRESS | idle |
| learn, detect, datalog, or auto-mode | RESET | auto-mode if configured, or idle |
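The button mapping can be summarized as a small transition function. The Python sketch below is an illustration only; the function name, the phase strings, and the `auto_mode_configured` flag are hypothetical and do not come from the firmware sources.

```python
# Illustrative sketch only: names are hypothetical, not firmware code.

# Transitions available from the idle phase.
IDLE_TRANSITIONS = {
    "SHORT_PRESS": "detect",
    "LONG_PRESS": "learn",
    "DOUBLE_PRESS": "datalog",
}


def next_phase(current_phase, action, auto_mode_configured=False):
    """Return the phase entered after `action` happens in `current_phase`."""
    if action == "RESET":
        # A reset starts the auto mode when it is configured, otherwise the node idles.
        return "auto-mode" if auto_mode_configured else "idle"
    if current_phase == "idle":
        # Unknown actions leave the node in idle.
        return IDLE_TRANSITIONS.get(action, "idle")
    # In learn, detect, datalog, or auto-mode, any press returns to idle.
    return "idle"


print(next_phase("idle", "LONG_PRESS"))
print(next_phase("learn", "SHORT_PRESS"))
print(next_phase("detect", "RESET", auto_mode_configured=True))
```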

3.2. Configuration

3.2.1. Default Configuration

If no microSD™ card is present, or if no valid file can be found at the root of the file system of the microSD™ card, then all the FW defaults are applied. The default values are given below:

- All sensors are disabled except vibrometer (id: 0.0) that is configured with the following parameters

- - ODR: 26667 Hz

- - Full Scale: 16 g

- The default configuration for the auto mode is equivalent to the following execution_config.json example file:

    {
      "info": {
        "version": "1.0",
        "auto_mode": false,
        "phases_iteration": 0,
        "start_delay_ms": 0,
        "execution_plan": [
          "idle"
        ],
        "learn": {
          "signals": 0,
          "timer_ms": 0
        },
        "detect": {
          "signals": 0,
          "timer_ms": 0,
          "threshold": 90,
          "sensitivity": 1.0
        },
        "datalog": {
          "timer_ms": 0
        },
        "idle": {
          "timer_ms": 1000
        }
      }
    }

3.2.2. Configuration Files

Any configuration file that is valid and present at the root of the microSD™ card overrides the FW defaults. The configuration is split into two configuration files:

- DeviceConfig.json: configures the sensors and the datalog. An example device configuration file is provided for reference and can be downloaded from the DeviceConfig.json link.

- execution_config.json: configures the execution contexts as well as the activation of the auto mode at reset and its definition.

Here is the definition of different parameters in the execution_config.json file:

- info: gives the definition of auto-mode as well as each execution context, any field present overrides the FW defaults.

- version: is the revision of the specification.

- auto_mode: if true, auto mode will start after reset and node initialization.

- execution_plan: is the sequence of execution steps (ten at most).

- start_delay_ms: indicates the initial delay in milliseconds applied after reset and before the first execution phase starts when auto mode is selected.

- phases_iteration: gives the number of times the execution_plan is executed, zero indicates an infinite loop

- Execution context settings for each phase:

- learn:

- - timer_ms: specifies the duration in ms of the execution phase. Zero indicates an infinite time.

- - signals: specifies the number of signals to be analyzed.

- detect:

- - timer_ms: specifies the duration in ms of the execution phase. Zero indicates an infinite time.

- - signals: specifies the number of signals to be analyzed.

- - threshold: specifies a value used to identify a signal as normal or abnormal.

- - sensitivity: specifies the sensitivity value for the NanoEdge AI library.

- datalog:

- - timer_ms: specifies the duration in ms of the execution phase. Zero indicates an infinite time.

- idle:

- - timer_ms: specifies the duration in ms of the execution phase. Zero indicates an infinite time.

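To make the interplay between execution_plan and phases_iteration concrete, the following Python sketch expands a plan into the sequence of phases that auto mode would step through. It is a sketch under assumptions: the function name is hypothetical, and the actual firmware scheduling is not described in this manual.

```python
# Illustrative sketch only: the function name is hypothetical, not firmware code.
from itertools import cycle, islice


def planned_phases(execution_plan, phases_iteration):
    """Return an iterator over the phases run in auto mode.

    A `phases_iteration` of zero repeats the plan forever.
    """
    if phases_iteration == 0:
        return cycle(execution_plan)
    return iter(list(execution_plan) * phases_iteration)


# Two passes over a two-step plan.
print(list(planned_phases(["learn", "detect"], 2)))

# Infinite plan: only the first five phases are taken here.
print(list(islice(planned_phases(["learn", "detect", "idle"], 0), 5)))
```

Note that a phase whose timer_ms is zero lasts indefinitely, so in practice the steps that follow it in the plan are only reached if that phase ends for another reason.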

Below is an example of the execution_config.json file:

    {
      "info": {
        "version": 1,
        "auto_mode": true,
        "phases_iteration": 0,
        "start_delay_ms": 1000,
        "execution_plan": [
          "learn",
          "detect",
          "datalog",
          "idle"
        ],
        "learn": {
          "signals": 0,
          "timer_ms": 0
        },
        "detect": {
          "signals": 0,
          "timer_ms": 0,
          "threshold": 90,
          "sensitivity": 1.0
        },
        "datalog": {
          "timer_ms": 0
        },
        "idle": {
          "timer_ms": 0
        }
      }
    }
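Since any field present in execution_config.json overrides the corresponding FW default, a file can be checked on the PC before it is copied to the microSD™ card. The Python sketch below overlays a user file on the defaults listed in this manual and checks the execution plan. The helper names and the validation rules (known phase names, at most ten steps) are assumptions derived from the parameter descriptions above; the firmware may validate the file differently.

```python
# Illustrative sketch only: helper names and validation rules are assumptions.
import copy
import json

# FW defaults, as listed in the default execution_config.json of this manual.
DEFAULT_CONFIG = {
    "info": {
        "version": "1.0",
        "auto_mode": False,
        "phases_iteration": 0,
        "start_delay_ms": 0,
        "execution_plan": ["idle"],
        "learn": {"signals": 0, "timer_ms": 0},
        "detect": {"signals": 0, "timer_ms": 0, "threshold": 90, "sensitivity": 1.0},
        "datalog": {"timer_ms": 0},
        "idle": {"timer_ms": 1000},
    }
}
VALID_PHASES = {"idle", "datalog", "learn", "detect"}
MAX_PLAN_STEPS = 10


def merge(defaults, overrides):
    """Return a copy of `defaults` where every field present in `overrides` replaces the default."""
    result = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = merge(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


def load_execution_config(text):
    """Parse the JSON `text`, overlay it on the defaults, and check the execution plan."""
    config = merge(DEFAULT_CONFIG, json.loads(text))
    plan = config["info"]["execution_plan"]
    if len(plan) > MAX_PLAN_STEPS:
        raise ValueError("execution_plan has more than %d steps" % MAX_PLAN_STEPS)
    unknown = [phase for phase in plan if phase not in VALID_PHASES]
    if unknown:
        raise ValueError("unknown phases in execution_plan: %s" % unknown)
    return config


user_file = '{"info": {"auto_mode": true, "execution_plan": ["learn", "detect"], "learn": {"timer_ms": 5000}}}'
config = load_execution_config(user_file)
print(json.dumps(config["info"]["learn"]))
```

In this example only the learn timer, the plan, and the auto_mode flag are overridden; all other fields keep their default values.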

4. Command line interface

The command-line interface (CLI) is a simple method for the user to control the application by sending command line inputs to be processed on the device.

4.1. Command execution model

The commands are grouped into four main sets:

- (CS1) Generic commands

- This command set allows the user to get the generic information from the device like the firmware version, UID, date, etc. and to start and stop an execution phase.

- (CS2) Predictive maintenance (PdM) commands

- This command set contains commands which are PdM specific. These commands enable users to work with the NanoEdge™ AI libraries for predictive maintenance.

- (CS3) Sensor configuration commands

- This command set allows the user to configure the supported sensors and to get the current configurations of these sensors.

- (CS4) File system commands

- This command set allows the user to browse through the content of the microSD™ card.

4.2. Execution phases and execution context

The three system execution phases are:

- Datalogging: data coming from the sensor are logged onto the microSD™ card.

- NanoEdge™ AI learning: data coming from the sensor are passed to the NanoEdge™ AI library to train the model.

- NanoEdge™ AI detection: data coming from the sensor are passed to the NanoEdge™ AI library to detect anomalies.

Each execution phase can be started and stopped with a user command issued through the CLI. An execution context, which is a set of parameters controlling execution, is associated with each execution phase. One single parameter can belong to more than one execution context.

The CLI provides commands to set and get execution context parameters. The execution context cannot be changed while an execution phase is active. If the user attempts to set a parameter belonging to any active execution context, the BUSY status is returned, and the requested parameter is not modified.
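The rule described above can be modeled in a few lines. The Python sketch below is a toy model, not the firmware implementation: the class, the parameter-to-context mapping, and the "OK" status string are hypothetical. Only the BUSY status and the phase names accepted by the start command come from this manual.

```python
# Illustrative sketch only: class, mapping, and the "OK" status are hypothetical.

# Parameters belonging to each execution context. A parameter such as
# "signals" can belong to more than one context.
CONTEXT_PARAMS = {
    "neai_learn": {"signals", "timer"},
    "neai_detect": {"signals", "timer", "threshold", "sensitivity"},
    "datalog": {"timer"},
}


class Node:
    """Toy model of the execution phase and its protected parameters."""

    def __init__(self):
        self.active_phase = None
        self.values = {}

    def start(self, phase):
        self.active_phase = phase

    def stop(self):
        self.active_phase = None

    def set_param(self, name, value):
        # A parameter of the active execution context cannot be modified.
        if self.active_phase is not None and name in CONTEXT_PARAMS[self.active_phase]:
            return "BUSY"
        self.values[name] = value
        return "OK"


node = Node()
print(node.set_param("threshold", 90))   # no phase is active
node.start("neai_detect")
print(node.set_param("threshold", 50))   # threshold belongs to the active context
node.stop()
print(node.values["threshold"])          # the earlier value is kept
```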

4.3. Command summary

| Command name | Command string | Note |

|---|---|---|

| CS1 - Generic Commands | ||

| help | help | Lists all registered commands with brief usage guidelines, including the list of applicable parameters. |

| info | info | Shows firmware details and version. |

| uid | uid | Shows STM32 UID. |

| date_set | date_set <date&time> | Sets date and time of the MCU system. |

| date_get | date_get | Gets date and time of the MCU system. |

| reset | reset | Resets the MCU System. |

| start | start <"datalog", "neai_learn", or "neai_detect" > | Starts an execution phase according to its execution context, i.e. datalog, neai_learn or neai_detect. |

| stop | stop | Stops all running execution phases. |

| CS2 - PdM Specific Commands | ||

| neai_init | neai_init | (Re)initializes the AI model by forgetting any learning. Used in the beginning and / or to create a new NanoEdge AI model. |

| neai_set | neai_set <param> <val> | Sets a PdM-specific parameter in an execution context. |

| neai_get | neai_get <param> | Displays the value of the parameters in the execution context. |

| CS3 - Sensor Configuration Commands | ||

| sensor_set | sensor_set <sensorID>.<subsensorID> <param> <val> | Sets the ‘value’ of a ‘parameter’ for a sensor with sensor id provided in ‘id’. |

| sensor_get | sensor_get <sensorID>.<subsensorID> <param> | Gets the ‘value’ of a ‘parameter’ for a sensor with sensor id provided in ‘id’. |

| sensor_info | sensor_info | Lists the type and ID of all supported sensors. |

| CS4 - File System Commands | ||

| ls | ls | Lists the directory contents. |

| cd | cd <directory path> | Changes the current working directory to the provided directory path. |

| pwd | pwd | Prints the name/path of the present working directory. |

| cat | cat <file path> | Display the (text) contents of a file. |

5. Generating a Machine Learning library with NanoEdge™ AI Studio

Using NanoEdge™ AI Studio, the library generation is done in five steps:

- Hardware description

- - Microcontroller type: Arm® Cortex®-M4

- - Maximum amount of RAM: usually a few Kbytes is enough (depends on the frame size)

- - Sensor type: accelerometer

- Contextual data are needed to test the performance of several machine learning models against the provided data, along with a multitude of different (hyper) parameters and signal processing algorithms. This step requires:

- - Regular dataset

- - Abnormal dataset

- Optimize and benchmark: NanoEdge™ AI Studio uses the provided contextual datasets to create a model that best separates the regular signals from the abnormal signals with the smallest possible RAM footprint.

- Validate the model: Emulate the behavior of your custom NanoEdge™ AI library directly on the PC as if the library was running on the microcontroller. The generated library can be tested with previously unseen data. The performance must be validated before deploying it on the board.

- Compile and download the library after choosing compilation flags from the Cartesiam website.

Refer to [1] for the full NanoEdge™ AI Studio documentation.

5.1. Generating contextual data

As mentioned previously, the user must provide contextual data for NanoEdge™ AI Studio to work on selecting the best algorithm and optimizing its hyperparameters. NanoEdge™ AI Studio documentation at cartesiam-neaidocs.readthedocs-hosted.com/studio/studio.html gives the following guidelines for the preparation of the contextual data:

- The Regular signals file corresponds to nominal machine behavior that includes all the different regimes, or behaviors, that the user wishes to consider as nominal.

- The Abnormal signals file corresponds to abnormal machine behavior, including some anomalies already encountered by the user, or that the user suspects could happen.

FP-AI-NANOEDG1 comes equipped with the data logging capabilities for embedded sensors on the STM32 sensor board.

To proceed with data acquisition:

- Make sure that a microSDTM card is inserted, and the USB cable is plugged in.

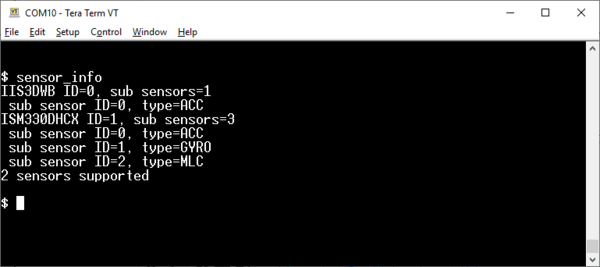

- List the supported sensors with the sensor_info command and collect their IDs using the command

$ sensor_infoas shown in the figure below.

- Select the desired sub-sensor, the accelerometer for instance, with the

sensor_setcommand:

$ sensor_set 0.0 enable 1 sensor 0.0: enable.enable

- You can further configure other parameters (Output Data Rate and full-scale settings) using sensor_set and sensor_get commands.

- Configure and run the device you want to monitor in normal operating conditions and start the data logging.

- Start data acquisition with the start datalog command

$ start datalog

- Stop data acquisition when enough data is collected with the stop command ( or even more conveniently with hitting the ESC key )

$ stop

- Configure the monitored device for abnormal operation, start it .and repeat steps 6. and 7. During each data acquisition cycle, a new folder is created with the name

STM32_DL_nnn. The suffixnnnincrements by one each time a new folder is created, starting from 001. This means that if noSTM32_DL_nnndirectory pre-exists, directoriesSTM32_DL_001andSTM32_DL_002are created for normal and abnormal data respectively. Each created directory contains two files:DeviceConfig.json: the configurations used for the sensors to acquire data.LSM6DSO.dat: the sensor acquisition data.
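The folder naming rule can be illustrated with a short Python sketch that predicts the name of the next acquisition folder from the names already present on the card. The function name is hypothetical, and the rule used here (highest existing suffix plus one) is an assumption; the firmware may pick the suffix differently when there are gaps in the numbering.

```python
# Illustrative sketch only: the numbering rule for gaps is an assumption.
import re

FOLDER_PATTERN = re.compile(r"^STM32_DL_(\d{3})$")


def next_datalog_folder(existing_names):
    """Return the name expected for the next acquisition folder."""
    used = []
    for name in existing_names:
        match = FOLDER_PATTERN.match(name)
        if match:
            used.append(int(match.group(1)))
    return "STM32_DL_%03d" % (max(used, default=0) + 1)


print(next_datalog_folder([]))
print(next_datalog_folder(["STM32_DL_001", "DeviceConfig.json"]))
```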

5.2. Exporting the data collection to NanoEdge™

FP-AI-NANOEDG1 comes with utility Python™ scripts located in Utilities\AI_ressources\DataLog to export data from the STWIN datalog application to the NanoEdge™ AI Studio format.

The script DataParser.py parses the .dat files in the provided folder and creates .csv files. It requires the path to a data directory that contains all the .dat files, named after the sensors, as well as DeviceConfig.json.

In FP-AI-NANOEDG1, the script is invoked as follows:

python STM_DataParser.py Sample-DataLogs/STM32_DL_001

python STM_DataParser.py Sample-DataLogs/STM32_DL_002

As a result, a .csv file is added to each directory.

These .csv files contain data in human-readable format, so that the user can interpret, clean, or plot them. Another step is needed to export them to the format required by NanoEdge™ AI Studio, as explained in cartesiam-neai-docs.readthedocs-hosted.com/studio/studio.html#expected-file-format. This is done using the script PrepareNEAIData.py, which converts the data stream into frames of equal length. The length, which depends on the use case and the features to be extracted, must be given as a parameter along with the good and the bad data folders (respectively STM32_DL_001 and STM32_DL_002 in the example) as follows:

python PrepareNEAIData.py Sample-DataLogs/STM32_DL_001 Sample-DataLogs/STM32_DL_002 -seqLength 1024

As a result, two .csv files are created:

- normalDataFull.csv

- abnormalDataFull.csv
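The framing step can be pictured with the following Python sketch, which cuts a stream of 3-axis samples into frames of equal length and writes each frame as one line of values. This is not the code of PrepareNEAIData.py: the function names are hypothetical, and the handling of the trailing incomplete frame (dropped here), the axis interleaving, and the comma delimiter are assumptions to be checked against the expected file format documentation.

```python
# Illustrative sketch only: not the code of PrepareNEAIData.py.


def split_into_frames(samples, seq_length):
    """Cut `samples` into consecutive frames of `seq_length` samples.

    Assumption: samples left over after the last full frame are dropped.
    """
    n_frames = len(samples) // seq_length
    return [samples[i * seq_length:(i + 1) * seq_length] for i in range(n_frames)]


def frame_to_csv_line(frame):
    """Flatten one frame of (x, y, z) samples into a single line: x1,y1,z1,x2,y2,z2,..."""
    return ",".join(str(value) for sample in frame for value in sample)


# Five 3-axis samples framed with a (tiny) sequence length of 2.
samples = [(1, 2, 3), (4, 5, 6), (7, 8, 9), (10, 11, 12), (13, 14, 15)]
for frame in split_into_frames(samples, 2):
    print(frame_to_csv_line(frame))
```

With -seqLength 1024, as in the invocation above, each line would hold 1024 samples per axis.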

NOTE: When multiple acquisitions are made for normal and abnormal behaviors of the machine, the user is expected to collect all the normal data files in a single folder and all the abnormal files in another folder. The script PrepareNEAIData.py can handle multiple files and creates a single .csv file for each of the normal and abnormal cases, irrespective of the number of .csv files present in the normal and abnormal data folders.

Detailed script usage is provided in a Jupyter™ notebook located in the datalog directory (Parser_for_HS_Logged_Data.ipynb). Samples of accelerometer data are provided in Utilities\AI_ressources\DataLog\Sample-DataLogs.

5.3. Installing the NanoEdge™ library

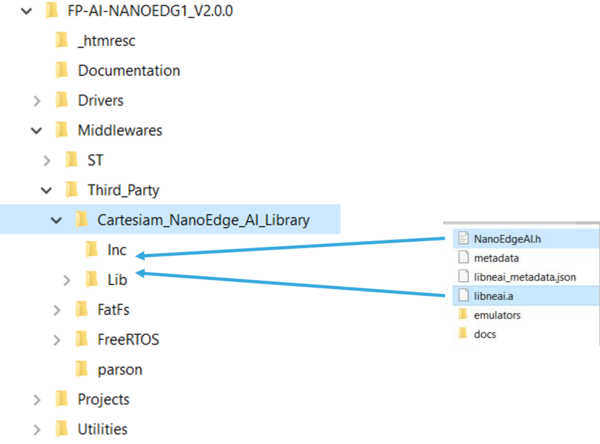

Once the libraries are generated and downloaded from NanoEdge™ AI Studio, the next step is to incorporate them into FP-AI-NANOEDG1. The function pack comes with library stubs in place of the actual libraries generated by NanoEdge™ AI Studio. These stubs act as placeholders and make it easy to link the libraries generated as described in Section 5.2 Exporting the data collection to NanoEdge™. To link the actual libraries, the user must copy the generated files and replace the existing stub (dummy) header file NanoEdgeAI.h and library file libneai.a, present in the folders lib and bin respectively. The relative path of these folders is /FP_AI_NANOEDG1/Middlewares/Third_Party/Cartesiam_NanoEdge_AI_Library/ as shown in the figure below.
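The stub replacement can also be scripted on the host. The following is a minimal sketch under stated assumptions: the generated NanoEdgeAI.h and libneai.a are assumed to sit together in one folder, the function name `install_generated_library` is hypothetical, and the file-to-folder mapping simply follows the text above and should be checked against the actual package.

```python
import shutil
from pathlib import Path

# File-to-folder placement as stated in the text (assumption: verify it
# against the folders present in your copy of the function pack).
PLACEMENT = {"NanoEdgeAI.h": "lib", "libneai.a": "bin"}


def install_generated_library(generated_dir, library_root, placement=PLACEMENT):
    """Copy the generated files over the stubs and return the written paths.

    generated_dir: folder holding the files downloaded from the Studio.
    library_root:  the Cartesiam_NanoEdge_AI_Library folder of the pack.
    """
    generated_dir = Path(generated_dir)
    library_root = Path(library_root)

    # Check every source first so that a missing file leaves the stubs intact.
    missing = [n for n in placement if not (generated_dir / n).is_file()]
    if missing:
        raise FileNotFoundError(f"missing generated files: {missing}")

    written = []
    for name, subfolder in placement.items():
        target = library_root / subfolder / name
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(generated_dir / name, target)  # overwrites the stub
        written.append(target)
    return written
```

Keeping the mapping in a parameter avoids hard-coding the folder layout, so the same helper can be reused if the layout differs.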

Once these files are copied, the project must be reconstructed and programmed on the sensor board to link the libraries. To perform these operations,

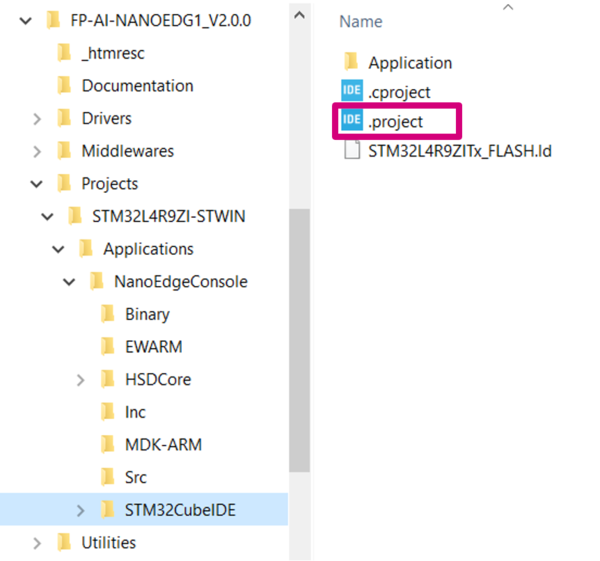

the user must open the .project file from the FP-AI-NANOEDG1 folder located at FP_AI_NANOEDG1/Projects/STM32L4R9ZI-STWIN/Applications/NanoEdgeConsole/STM32CubeIDE/ as shown in the figure below.

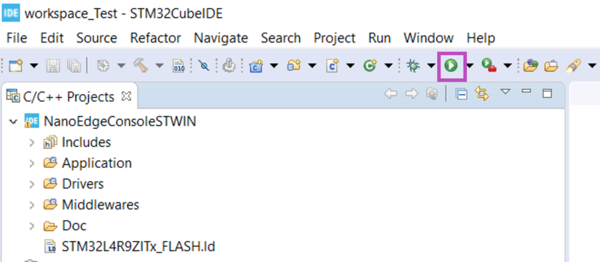

To install the new firmware after linking the library, connect the sensor board, then build and download the project in STM32CubeIDE using the play button highlighted in the following figure.

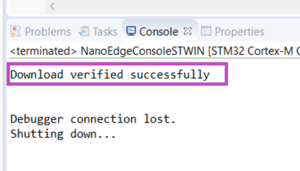

See the console for the outputs and wait for the build and download success message as shown in the following figure.

6. Building and programming

Once a new binary is generated through the build process with a Machine Learning library from NanoEdge™ AI Studio, the user can install it on the sensor node and start testing it in real conditions. To install and test it on the target, follow these steps.

- Connect the STWIN board to the personal computer through an ST-LINK using a USB cable. This makes the STWIN board appear as a drive in the drive list of the computer, named something like STLINK_V3M.

- Drag and drop the newly generated FP-AI-NANOEDGE-L4R9ZI-STWIN.bin file onto the drive STLINK_V3M.

- When the programming process is complete, connect to the STWIN through the console. A welcome message confirms that the stub has been replaced with the Cartesiam libraries, as shown in the figure below.

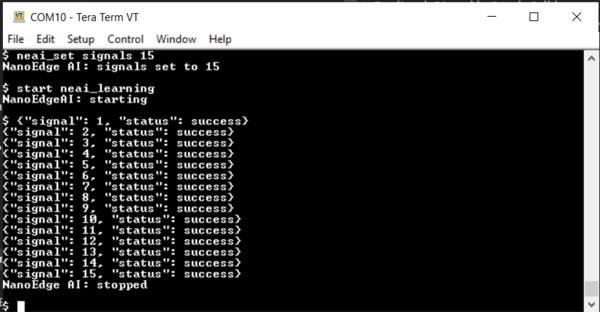

- To use these libraries for condition monitoring, the user must provide some learning data of the nominal condition. To do so, place the machine to be monitored in nominal conditions, and start the learning phase, either through the CLI console or by performing a long press on the user button, and observe the screen as shown in the following figure (if the console is attached), or the LED blinking pattern1 as indicated in the autonomous mode to show the learning has started.

The signals used for learning are counted. During the benchmark phase, a graph shows the number of signals to be used for learning in the form of minimum iterations. It is suggested to use between 3 and 10 times more signals than this number.
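The suggested learning budget is simple arithmetic on the minimum iterations value. The sketch below reads "3 to 10 times" as a multiplication of that value, which is an interpretation of the text, and the function name `recommended_learning_range` is hypothetical.

```python
def recommended_learning_range(min_iterations: int) -> tuple:
    """Return (lower, upper) bounds for the number of learning signals.

    Assumption: the advice is read as 3x to 10x the minimum iterations
    value shown in the benchmark graph.
    """
    if min_iterations <= 0:
        raise ValueError("min_iterations must be a positive integer")
    return 3 * min_iterations, 10 * min_iterations
```

For a benchmark that reports 30 minimum iterations, this gives a range of 90 to 300 learning signals.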

- Once the desired number of signals has been learned, press the user button again to stop the process. The learning can also be stopped by pressing the Esc key or simply by entering the stop command in the console. Upon stopping the phase, the console shows the message NanoEdge AI: stopped.

- The anomaly detection mode can be started either through the console or by performing a short press on the user button. In the detection mode, the NanoEdge™ library calculates the similarity between the observed signal and the nominal signals it has seen before. For normal conditions, the similarity is very high, whereas for abnormal signals it is low. If the similarity is lower than a preset value, the signal is considered an anomaly, and the console shows the number of the bad signal along with the calculated similarity. The similarity of normal signals is not shown.

- The detect phase can be stopped by pressing the user button again if the sensor node is being used in autonomous mode. When the console is connected, the detect phase can also be stopped by pressing the Esc key on the keyboard or by entering the stop command in the console. Upon stopping, the console shows the message NanoEdge AI: stopped.
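The decision rule used in the detection phase can be summarized in a few lines. This is a host-side sketch of the thresholding logic only, not the NanoEdge™ library API. The function name `find_anomalies`, the 0 to 100 similarity scale, and the default threshold of 90 are assumptions chosen for illustration.

```python
def find_anomalies(similarities, threshold=90):
    """Return (signal_number, similarity) for every signal below threshold.

    Signal numbers start at 1. Signals at or above the threshold are treated
    as normal and are not reported, mirroring the console output described
    above. The threshold value is an illustrative assumption.
    """
    return [
        (number, similarity)
        for number, similarity in enumerate(similarities, start=1)
        if similarity < threshold
    ]
```

For the similarity sequence 98, 95, 42, 97, 60, only the third and fifth signals would be reported, with similarities 42 and 60.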

Note: Some practical use cases (such as ukulele, coffee machine, and others) and tutorials are available from the Cartesiam website:

- cartesiam-neai-docs.readthedocs-hosted.com/tutorials/ukulele/ukulele.html

- cartesiam-neai-docs.readthedocs-hosted.com/tutorials/coffee/coffee.html

7. Resources

7.1. Getting started with FP-AI-NANOEDG1

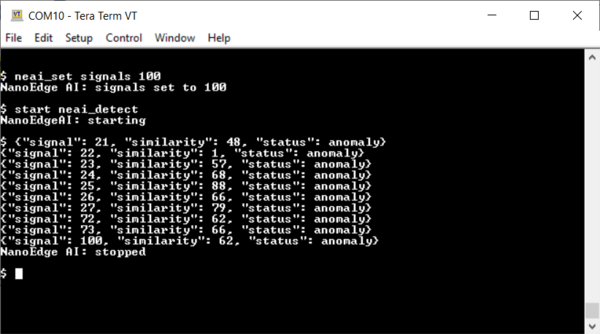

This wiki page provides a quick-start guide for FP-AI-NANOEDG1 version 1.0 for the STM32L562E-DK discovery kit board. In addition to the interactive CLI provided in the current version of the function pack, version 1.0 also had simple LCD support, which is very convenient for demonstration purposes when operating in button-operated or battery-powered settings. The following images show FP-AI-NANOEDG1 running on the STM32L562E-DK discovery kit board during the data logging, learning, and detection phases.

Users are also invited to read the user manual of version 1.0 for more details on how to set up a condition monitoring application on STM32L562E-DK discovery kit board using FP-AI-NANOEDG1.

7.2. How to perform condition monitoring wiki

This user manual provides basic information on how to use FP-AI-NANOEDG1 V2.0. In addition, an application example of FP-AI-NANOEDG1 on the STM32 platform is available, in which condition monitoring is performed on a small GimBal motor provided in the kit. The wiki page How to perform condition monitoring on STM32 using FP-AI-NANOEDG1 gives a full example of how to collect the dataset for library generation with NanoEdge AI Studio using the data logging functionality, and then how to use the libraries to detect unbalance in the motor.

7.3. Cartesiam resources

The function pack is powered by Cartesiam. The AI libraries used with the function pack can be generated using NanoEdge AI Studio. Brief details on generating the libraries are provided in the sections above; more detailed information and some example projects can be found in the NanoEdge AI Studio documentation.

- STEVAL-STWINKT1B
